HA Questions

In an ESXi 5.5 cluster, if I have 4 ESXi 5.5 hosts and I set "Host failures the cluster tolerates" to 1, what happens if 2 ESXi hosts go down?


HA will try to restart all VMs from the failed hosts, but whether all of them restart successfully depends on your slot size and on the size of the VMs from the failed hosts.

Take a look at the first scenario in this post: Answering some admission control questions

To read more about admission control, take a look at this other post: HA Admission Control the basics – Part 2/2

Richardson Porto

Senior Infrastructure Specialist

LinkedIn: http://linkedin.com/in/richardsonporto


You have to calculate the slot size before you set "Host failures the cluster tolerates".

What is a Slot?

A slot is a logical representation of the memory and CPU resources that satisfy the requirements for any powered-on virtual machine in the cluster.

In other words a slot size is the worst case CPU and Memory reservation scenario in a cluster. This directly leads to the first “gotcha”:

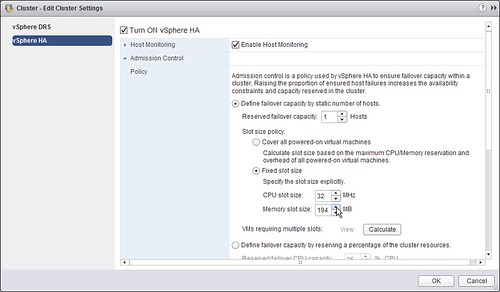

HA uses the highest CPU reservation of any given VM and the highest memory reservation of any given VM. With vSphere 4.1, if no reservation higher than 256 MHz is set, HA will use a default of 256 MHz for CPU and a default of 0 MB + memory overhead for memory. With vSphere 5.0 the default for CPU has been brought down to 32 MHz.

If VM1 has 2 GHz and 1024 MB reserved and VM2 has 1 GHz and 2048 MB reserved, the slot size for memory will be 2048 MB + memory overhead and the slot size for CPU will be 2 GHz.
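The VM1/VM2 example above can be sketched in a few lines of Python. This is only an illustration of the rule as described in this post, not how HA is implemented. The `VM` class, the `slot_size` function and the per-VM `mem_overhead_mb` field are made-up names, and the overhead is set to 0 here because the post does not give a value for it.

```python
from dataclasses import dataclass

DEFAULT_CPU_SLOT_MHZ = 32  # vSphere 5.0+ default mentioned in the post


@dataclass
class VM:
    name: str
    cpu_reservation_mhz: int
    mem_reservation_mb: int
    mem_overhead_mb: int = 0  # assumption: real overhead varies per VM


def slot_size(vms, default_cpu_mhz=DEFAULT_CPU_SLOT_MHZ):
    """Return (cpu_slot_mhz, mem_slot_mb) as the worst-case reservations."""
    # CPU: highest reservation, but never below the default.
    cpu = max([default_cpu_mhz] + [vm.cpu_reservation_mhz for vm in vms])
    # Memory: highest reservation plus overhead (0 if there are no VMs).
    mem = max((vm.mem_reservation_mb + vm.mem_overhead_mb for vm in vms), default=0)
    return cpu, mem


vms = [VM("VM1", 2000, 1024), VM("VM2", 1000, 2048)]
print(slot_size(vms))  # (2000, 2048), i.e. 2 GHz CPU and 2048 MB memory
```

Note how the two values come from different VMs: one large CPU reservation and one large memory reservation are enough to inflate the slot for the whole cluster.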

Basic design principle: Be really careful with reservations. If there's no need to have them on a per-VM basis, don't configure them.

By the way, did you know that with vSphere 5.1 and the Web Client you can specify fixed slot sizes in the UI? Nice, right? Keep that in mind when you see some of the advanced settings in the next section. Depending on the version you are running, you could potentially just configure it in the UI.

How does HA calculate how many slots are available per host?

Of course we need to know what the slot size for memory and CPU is first. Then we divide the total available CPU resources of a host by the CPU slot size and the total available memory resources of a host by the memory slot size. This leaves us with a number of slots for both memory and CPU. The most restrictive number is the number of slots for this host. If you have 25 CPU slots but only 5 memory slots, the number of available slots for this host will be 5.
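As a rough sketch of that division, the per-host slot count is the smaller of the two quotients. The function name and the host figures below are made up, chosen only to reproduce the 25 CPU slots versus 5 memory slots case from the paragraph above.

```python
def slots_per_host(host_cpu_mhz, host_mem_mb, cpu_slot_mhz, mem_slot_mb):
    """Number of whole slots a host can hold; the scarcer resource wins."""
    cpu_slots = host_cpu_mhz // cpu_slot_mhz  # whole CPU slots only
    mem_slots = host_mem_mb // mem_slot_mb    # whole memory slots only
    return min(cpu_slots, mem_slots)


# Hypothetical host: 50,000 MHz and 10,240 MB available,
# with a slot of 2,000 MHz / 2,048 MB -> 25 CPU slots, 5 memory slots.
print(slots_per_host(50_000, 10_240, 2_000, 2_048))  # 5
```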

As you can see, this can lead to very conservative consolidation ratios. With vSphere this is configurable. If you have just one VM with a really high reservation, you can set the advanced settings das.slotCpuInMHz or das.slotMemInMB to lower the slot size used in these calculations. These settings let you specify an upper boundary for your slot size. When one of your VMs has an 8GB reservation, they can be used to define, for instance, an upper boundary of 1GB to avoid resource wastage and an overly conservative slot size. However, when das.slotMemInMB is configured to 2048MB and the highest reservation is only 500MB, the slot size for memory will be 500MB + memory overhead. If a lower boundary needs to be specified, the advanced settings das.vmMemoryMinMB or das.vmCpuMinMHz can be used.

To make sure VMs with high reservations can still be powered on, these VMs will take up multiple slots. Keep in mind that pre-vSphere 4.1, when you were low on resources, this could mean that you were not able to power on such a high-reservation VM, as resources would be fragmented throughout the cluster instead of located on a single host.
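To make the upper-boundary behaviour concrete, here is a small sketch. It assumes the cap works as a simple minimum against the worst-case reservation and it ignores memory overhead for simplicity. The function names are made up for this example.

```python
import math


def capped_slot_size(highest_reservation_mb, upper_bound_mb=None):
    """Slot size after applying an optional upper boundary such as das.slotMemInMB."""
    if upper_bound_mb is None:
        return highest_reservation_mb
    # The cap only matters when the worst-case reservation exceeds it.
    return min(highest_reservation_mb, upper_bound_mb)


def slots_needed(vm_reservation_mb, slot_size_mb):
    """A VM larger than one slot takes up several slots (always at least one)."""
    return max(1, math.ceil(vm_reservation_mb / slot_size_mb))


slot = capped_slot_size(8192, upper_bound_mb=1024)  # 8GB reservation, 1GB cap
print(slot, slots_needed(8192, slot))               # 1024 8
print(capped_slot_size(500, upper_bound_mb=2048))   # 500, the cap is not reached
```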

As of vSphere 4.1, HA is closely integrated with DRS. When a failover occurs, HA will first check if there are resources available on that host for the failover. If resources are not available, HA will ask DRS to accommodate these where possible. HA, as of 4.1, will be able to request a defragmentation of resources to accommodate the VM's resource requirements. How cool is that?! One thing to note, though, is that HA will request it, but a guarantee still cannot be given, so you should be cautious when it comes to resource fragmentation.

The following is an example of where resource fragmentation could lead to issues:

If you need to use a high reservation for either CPU or memory, these options (das.slotCpuInMHz or das.slotMemInMB) could definitely be useful. There is, however, something that you need to know. Check this diagram and see if you spot the problem; das.slotMemInMB has been set to 1024MB.

Notice that the memory slot size has been set to 1024MB. VM24 has a 4GB reservation set. Because of this, VM24 spans 4 slots. As you might have noticed, none of the hosts has 4 slots left. Although in total there are enough slots available, they are fragmented and HA might not be able to actually boot VM24. Keep in mind that admission control does not take fragmentation of slots into account when slot sizes are manually defined with advanced settings. It does count 4 slots for VM24, but it will not verify the number of available slots per host. As explained, as of vSphere 4.1 it will request defragmentation, but as stated, it cannot be guaranteed.
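The gap between "enough slots in total" and "enough slots on one host" can be shown with a short sketch. The free-slot counts per host below are hypothetical, since the diagram is not reproduced in this thread, and the two functions only illustrate the checks described above.

```python
def admission_control_allows(free_slots_per_host, slots_required):
    """Cluster-wide view: only the total number of free slots is counted."""
    return sum(free_slots_per_host) >= slots_required


def fits_on_single_host(free_slots_per_host, slots_required):
    """What a restart actually needs: all slots free on one host."""
    return any(free >= slots_required for free in free_slots_per_host)


free_slots = [3, 2, 2]  # hypothetical free slots on three hosts
vm24_slots = 4          # 4GB reservation with a 1024MB slot size

print(admission_control_allows(free_slots, vm24_slots))  # True
print(fits_on_single_host(free_slots, vm24_slots))       # False
```

Seven slots are free in total, so the VM is counted as covered, yet no single host can hold it without DRS first moving other VMs around.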

Basic design principle: Avoid using advanced settings to decrease slot size, as it might lead to more downtime.

Another issue that needs to be discussed is "unbalanced clusters". Unbalanced would, for instance, be a cluster with 5 hosts of which one contains substantially more memory than the others. What would happen to the total number of slots in a cluster with the following specs?

Five hosts, each with 16GB of memory, except for one host (esx05) which has recently been added and has 32GB of memory.

One of the VMs in this cluster has 4 CPUs and 4GB of memory. Because there are no reservations set, the memory overhead of 325MB is used to calculate the memory slot size. (It's more restrictive than the CPU slot size.)

This results in 50 slots each for esx01, esx02, esx03 and esx04. However, esx05 will have 100 slots available. Although this sounds great, admission control rules out the host with the most slots, as it takes the worst-case scenario into account. In other words, the end result is a 200-slot cluster. With 5 hosts of 16GB, (5 x 50) – (1 x 50), the result would have been exactly the same. (Please keep in mind that this is just an example; this also goes for a CPU-unbalanced cluster when CPU is the most restrictive!)
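The arithmetic for the unbalanced cluster can be sketched as follows. The function name is made up, and the model is simply "assume the largest hosts are the ones that fail", which is the worst-case behaviour described above.

```python
def usable_cluster_slots(slots_per_host, host_failures_tolerated=1):
    """Slots left for running VMs after setting aside the largest host(s)."""
    ordered = sorted(slots_per_host)
    n = min(host_failures_tolerated, len(ordered))
    # Worst case: the hosts with the most slots are the ones that fail.
    return sum(ordered[:len(ordered) - n])


unbalanced = [50, 50, 50, 50, 100]  # esx01 to esx04 with 16GB, esx05 with 32GB
balanced = [50] * 5                 # five 16GB hosts

print(usable_cluster_slots(unbalanced))  # 200
print(usable_cluster_slots(balanced))    # 200
```

The extra 50 slots on esx05 add nothing to the usable capacity, which is why the balanced and unbalanced clusters end up with the same number.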

Basic design principle: Balance your clusters when using admission control, and be conservative with reservations, as they lead to decreased consolidation ratios.

For more details, go through the following article: vSphere High Availability (HA) Technical Deepdive - Yellow Bricks