Virtual Machine Disks consolidation is needed


Hi All,

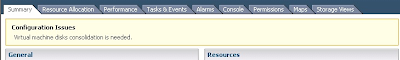

After removing the snapshots through Orchestrator and logging in to the VI Client, the VM summary shows "Configuration Issues: Virtual machine disks consolidation is needed".

Could you guide me on how to do the consolidation through Orchestrator?

```javascript
// activeSnapshot and removeChildren appear to be workflow inputs; content is a workflow attribute.
var logtext;

if (activeSnapshot.config) {
    var vmName = activeSnapshot.config.name;
    var snapshotID = activeSnapshot.id;

    // Only removeChildren is passed; the optional consolidate argument is omitted.
    var task = activeSnapshot.removeSnapshot_Task(removeChildren);
    //var task = activeSnapshot.removeSnapshot_Task(consolidate);

    // Wait for the vCenter task to finish.
    var actionResult = System.getModule("com.vmware.library.vc.basic").vim3WaitTaskEnd(task);

    logtext = "The snapshot with the id " + snapshotID + " from the Virtual machine '" + vmName + "' has been removed.";
} else {
    logtext = "The snapshot with the id " + activeSnapshot.id + " has already been removed.";
}

content = content + "<br>" + logtext;

System.log(logtext);
```

Note: the code above is taken from the Remove snapshot script.

Accepted Solutions


I did some testing, as we were looking at doing the same thing. It appears that the default workflow VMware includes behaves differently from what its API documentation describes.

vCenter Server 5.1 Plug-In API Reference for vCenter Orchestrator

(Excerpt from the vCenter Orchestrator API 5.1.1 documentation)

===============================

removeSnapshot_Task

Removes this snapshot and deletes any associated storage.

Parameters

| Name | Type | Description |
|---|---|---|
| removeChildren | boolean | Flag to specify removal of the entire snapshot subtree. |
| consolidate* | boolean | (optional) If set to true, the virtual disk associated with this snapshot will be merged with other disk if possible. Defaults to true. |

*Need not be set

Return Value

| Type | Description |
|---|---|
| VcTask | |

=================================================

The documentation says that `removeSnapshot_Task` does not require the `consolidate` parameter to be set to true, because it consolidates by default (this appears to be false, at least in this instance). When I added the `consolidateSS` variable and passed it to `removeSnapshot_Task` in the "Remove Snapshot" schema item, as in the code below, the alert no longer came up.

```javascript
// Set to true if you want to consolidate the disk
var consolidateSS = true;

// Remove the snapshot, passing the optional consolidate flag explicitly
var task = activeSnapshot.removeSnapshot_Task(removeChildren, consolidateSS);

// Wait for the vCenter task to finish
var actionResult = System.getModule("com.vmware.library.vc.basic").vim3WaitTaskEnd(task);
```

Please keep in mind that this should be tested in a test environment before deploying to production. I hope this helps you out and saves some headaches.

---

I have not implemented this myself yet, but you may want to try `vm.consolidateVMDisks_Task()`, where `vm` is the VM object.

You can also check `vm.runtime.consolidationNeeded` to detect whether consolidation is needed.

Hope this helps.
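A minimal sketch combining the suggestions in this thread: pass `consolidate` explicitly to `removeSnapshot_Task`, then check `runtime.consolidationNeeded` and call `consolidateVMDisks_Task()` as a fallback. It assumes vCenter 5.0 or later, where that property and method exist. To keep the logic testable outside Orchestrator, the VM, the snapshot, a task-wait function and a logger are passed in as parameters; the function name `removeSnapshotAndConsolidate` and this wiring are hypothetical. In a scriptable task, `waitTaskEnd` would wrap the library's `vim3WaitTaskEnd` action (called the same way as in the scripts above) and `log` would be `System.log`. Depending on how the plug-in caches properties, `vm.runtime` may need to be re-read after the removal task; this sketch does not re-fetch it.

```javascript
// Hypothetical helper: remove a snapshot with explicit consolidation, then run
// consolidateVMDisks_Task() if vCenter still flags the VM as needing consolidation.
// vm: VC:VirtualMachine, snapshot: VC:VirtualMachineSnapshot,
// waitTaskEnd: function that blocks until a VC:Task completes, log: function(string).
// Returns true if the fallback consolidation task was run.
function removeSnapshotAndConsolidate(vm, snapshot, removeChildren, waitTaskEnd, log) {
    // Pass consolidate = true explicitly instead of relying on the documented default.
    waitTaskEnd(snapshot.removeSnapshot_Task(removeChildren, true));

    // runtime.consolidationNeeded is true when the VM's disks still need consolidating.
    if (!vm.runtime.consolidationNeeded) {
        return false;
    }

    log("Disk consolidation still needed for '" + vm.name + "'; calling consolidateVMDisks_Task()");
    waitTaskEnd(vm.consolidateVMDisks_Task());
    return true;
}
```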

---

Hi

Also consider opening an SR for this. I remember seeing this discussion somewhere in the past, and you might have hit a bug in the vCenter library in vCO.

Cheers,

Joerg

---

vSphere 5 introduced a new feature to clean up VM snapshot "left-overs", which can be the result of a snapshot removal where the consolidation step has gone wrong. In that case the Snapshot Manager interface tells you there are no more snapshots, but at datastore level they still exist and may even still be in use and growing. This can cause all kinds of problems: first, VM performance issues, because the VM is still running in snapshot mode; second, you are not able to alter the virtual disks of this VM; and in the long run you could potentially run out of space on your datastore because the snapshot keeps growing.

Prior to vSphere 5 it was possible to fix such a situation through the CLI. With vSphere 5 you get an extra option in the VM > Snapshot menu called "Consolidate". This feature should clean up any discrepancies between the Snapshot Manager interface and the actual situation at datastore level.

I'm always a little reluctant when I'm at a customer that uses a lot of snapshotting. It is a very helpful and useful tool, but you have to use it with caution, otherwise it can cause big problems. Usually the problems start when snapshots are kept for a long period of time or when snapshots are layered on top of each other, but even if you are aware of the problems they can cause when used wrongly, you can still run into issues when using snapshots.

That being said, look at the features offered by the various storage vendors, specifically the VM backup solutions they offer. When a VM is running while it is being backed up, they all use a vSphere snapshot (with or without the option to quiesce the guest file system). Basically, if your company uses a SAN that leverages this functionality and it is configured to back up your VMs daily, you have an environment that uses snapshots a lot, and you could therefore run into more snapshot/consolidation issues than if you did not have a SAN with this functionality (nobody snapshots all of their VMs manually on a daily basis, I hope).

I was recently at a large customer (2000+ VMs) that uses its storage device's feature to back up complete datastores daily and also uses vSphere snapshots to get consistent backups of running VMs.

For some reason they run into snapshot/consolidation issues pretty often. They explained to me that the Consolidate feature worked fine on VMs with a Linux guest OS, but they almost always had a problem when trying to consolidate a VM with a Windows guest OS: it would simply fail with an error.

So I had a look at one of their VMs that could not be consolidated, although the vCenter client reported that it needed consolidation.

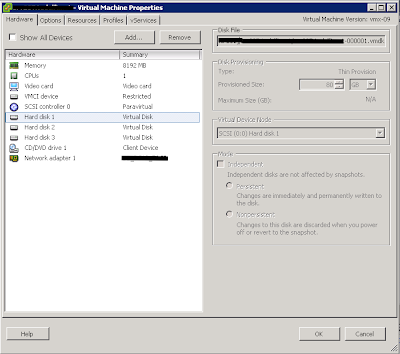

Looking at the VM properties, I could see that it still had a "snapshot" (delta file) as its virtual disk: the virtual disk was a -000001.vmdk instead of a "normal" .vmdk.

If a VM in this state is still running, there is an issue, but uptime is not directly affected. Most of the time, however, the VM will be down and will not power on again because of the issue; it simply reports an error about a missing snapshot on which the disk depends.

The way I solved it at this customer (multiple times) was by editing the VM's configuration file (.vmx), re-registering the VM in vCenter and afterwards manually cleaning up the remaining snapshot files. Please note that if the VM was running in snapshot mode, all changes written to the snapshot will be lost with this procedure; in other words, the VM will return to its "pre-snapshot" state. For this particular customer that was not an issue, because the failed snapshots were initiated for backup purposes, so no changes were made to the VM while it ran in snapshot mode.

So if you run into this issue and you know that no changes were made to the VM, or losing those changes is acceptable, you can solve it with these steps:

- Download the .vmx file and open it with a text editor (I prefer Notepad++ for this kind of work). Find the line that configures the virtual disk file, e.g. `scsi0:0.fileName = "virtual-machine-000001.vmdk"`, and remove "-000001" so that you are left with `scsi0:0.fileName = "virtual-machine.vmdk"`. Save the file.

- Rename the .vmx file on the datastore to .old and upload the edited .vmx file.

- Either reload the VM by using PowerCLI** or remove the VM from the inventory and add it again.

- Power on the VM.

- If you get the "Virtual Disk Consolidation needed" message, go to the Snapshot menu and click "Consolidate". It should now run correctly and remove the message.

- Manually remove the unused files (.old, -000001.vmdk and -000001-flat.vmdk) from the datastore (I use WinSCP for this kind of work on a datastore).
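The text edit in the first step can also be scripted. The sketch below is plain JavaScript, not an Orchestrator or vSphere API: it takes the .vmx contents as a string and points every `scsiX:Y`, `sataX:Y` or `ideX:Y` `fileName` entry that ends in `-NNNNNN.vmdk` back at the base disk. It assumes delta disks follow the `<base>-000001.vmdk` naming shown above and, exactly like the manual procedure, it discards whatever was written to the snapshot. The function name `pointDisksAtBase` is hypothetical, and downloading/uploading the file is left to you.

```javascript
// Hypothetical helper: rewrite .vmx disk entries so they reference the base disk
// instead of a snapshot delta, e.g.
//   scsi0:0.fileName = "virtual-machine-000001.vmdk"
// becomes
//   scsi0:0.fileName = "virtual-machine.vmdk"
// Assumption: delta disks are named "<base>-NNNNNN.vmdk" (six digits), as in the post.
// Any data written to the snapshot is lost once the VM uses the base disk again.
function pointDisksAtBase(vmxText) {
    // Group 1: key and opening quote, group 2: base name, group 3: extension and closing quote.
    var diskLine = /^([ \t]*(?:scsi|sata|ide)\d+:\d+\.fileName[ \t]*=[ \t]*")([^"\r\n]*?)-\d{6}(\.vmdk")/gm;
    return vmxText.replace(diskLine, "$1$2$3");
}
```

For example, `pointDisksAtBase('scsi0:0.fileName = "virtual-machine-000001.vmdk"')` returns `'scsi0:0.fileName = "virtual-machine.vmdk"'`, while entries that already point at a base disk are left untouched. Keeping the renamed .old file, as in the steps above, still gives you a way back.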

---

Hi,

I tested it in my test environment and it worked. I appreciate all your effort and your sharing of knowledge with the Orchestrator community.

---

For what it's worth, guys:

I had the same issue as described above, so I decided to try cloning the VM to make it a bit easier if it all went south. To be honest, I didn't expect it to work, as I'm sure the clone uses snapshot deltas in order to copy the vmdk. However, not only did the clone work, it also cleared the original problem! All I can think of is that at the end of the clone process, both the deltas from the clone and from the overnight snapshot were played back into the original vmdks? Maybe someone with a better understanding can clarify. Anyway, net result: working server, no snapshot deltas and no config issues.

---

Simple solution: create a snapshot of the VM with the alert, then delete/consolidate all snapshots in Snapshot Manager.
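In Orchestrator, the same workaround might look like the sketch below. It assumes the plug-in's `createSnapshot_Task(name, description, memory, quiesce)` and `removeAllSnapshots_Task(consolidate)` mirror the vSphere API methods of the same names (the optional `consolidate` argument of `RemoveAllSnapshots_Task` exists from vSphere API 5.0). The snapshot name and the `snapshotThenRemoveAll` function are hypothetical, and `waitTaskEnd` stands in for the library's `vim3WaitTaskEnd` action. Note that removing all snapshots merges every snapshot on the VM, not only the temporary one.

```javascript
// Hypothetical helper: take a temporary snapshot, then delete all snapshots so that
// vCenter merges the leftover delta disks back into the base disks.
// vm: VC:VirtualMachine; waitTaskEnd: function that blocks until a VC:Task completes.
function snapshotThenRemoveAll(vm, waitTaskEnd) {
    // No memory dump and no guest quiescing: the snapshot only exists to be removed again.
    var createTask = vm.createSnapshot_Task(
        "consolidation-helper",                        // hypothetical snapshot name
        "Temporary snapshot to trigger consolidation",
        false,                                         // memory
        false                                          // quiesce
    );
    waitTaskEnd(createTask);

    // Request consolidation explicitly rather than relying on the default.
    var removeTask = vm.removeAllSnapshots_Task(true);
    waitTaskEnd(removeTask);
}
```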

---

Thanks King_Robert!!!

---

Thanks MrCraig. It really is a simple and elegant solution.

---

Yes, THANK YOU MrCraig! This was a very smooth and simple solution that worked like a charm!

---

It's wonderful! I was searching for a solution to the same issue and it's a really simple one.

Thanks MrCraig

---

Worked! Thanks MrCraig.

---

Exactly what I was going to suggest.

No need for editing VMX files and other "fireworks".

---

Hi

I'm new to VMware: am I able to take a snapshot of the VM while it is powered on, during the day while it's being used?

Thanks

---

Yes, you can, but once the removal operation has started you can't do anything else with the VM.

---

Hello:

I have a similar problem, but I cannot consolidate because the datastore is out of space.

When I try to consolidate, it shows this error:

An error occurred while consolidating disks: msg.disklib.NOSPACE.

I have cloned the VM to another datastore that has a little more space (just temporarily), and I am going to test whether this works.

The original VM is up and running properly, but I have a few questions.

1º Can I move the original VM to another datastore with more space and then consolidate, or is this very risky?

2º Can I keep using the current VM without consolidating until I buy a new datastore, then move it to the new datastore and consolidate? Or should I clone the VM to the new datastore and then remove the old VM?

3º If the cloned version works like the original VM, I suppose I can move the clone to production and remove the unconsolidated VM from the original datastore?

Kind Regards,

---

Please find your answers below:

1º Can I move the original VM to another datastore with more space and then consolidate, or is this very risky? Yes, you can; just do a Storage vMotion (svMotion).

2º Can I keep using the current VM without consolidating until I buy a new datastore, or should I clone the VM to the new datastore and then remove the old VM? It is a risk to run a VM that needs consolidation. This is nothing but a snapshot disk that is using space on your datastore, and since your datastore has no space left, I would recommend either cloning the VM or moving it across.

3º If the cloned version works like the original VM, can I move the clone to production and remove the unconsolidated VM from the original datastore? I think that when you use svMotion to move a VM whose disks need consolidation to another datastore, no disk consolidation will be required once the move is complete.

---

Very helpful, thanks. Snapshot deletion failed after a VMware Tools and hardware update was applied on 5.5.3. I used the Consolidate option, which put the delta vmdk back to a normal vmdk but left the snapshot file behind, so I then took a fresh snapshot followed by Delete All snapshots, and the leftover file was removed.

---

Thanks King_Robert, this worked for me.