ESXi 5.1 Purple screen

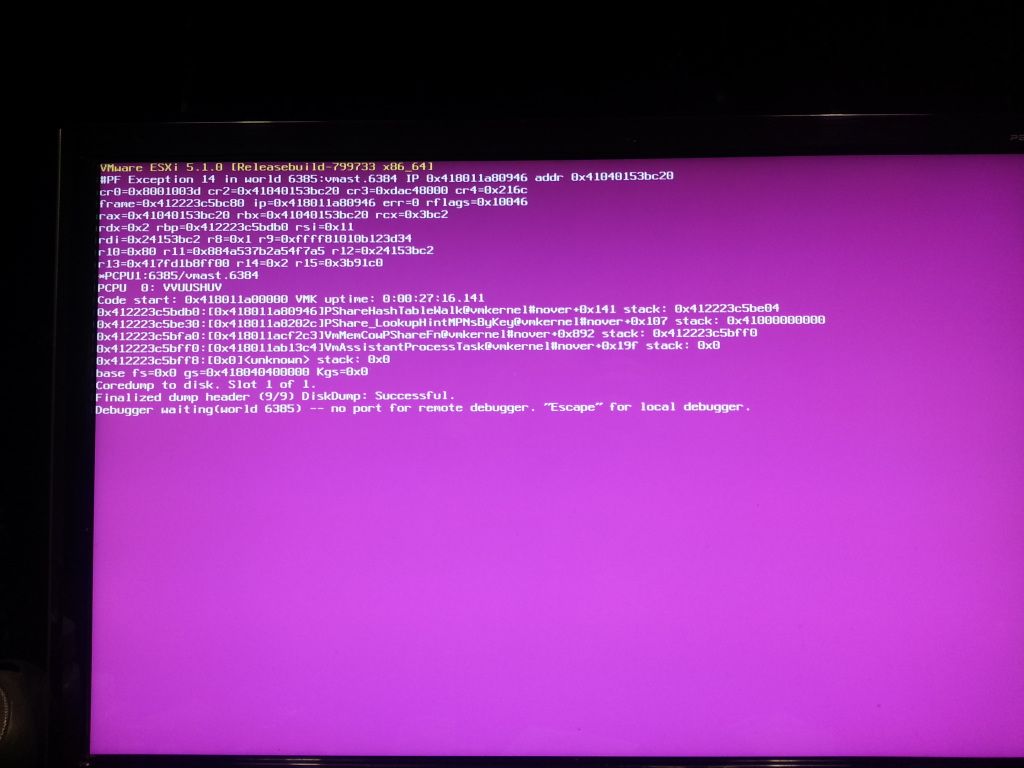

I recently upgraded my host from 5.0 U1 to 5.1 and am in the process of updating VMware Tools in my VMs. While doing so, the host keeps crashing to a purple screen. Prior to the upgrade the system was working just fine. Here is a picture of the purple screen.

Host system specs are as follows.

Gigabyte z77x-UD3H

i7-3770s

M1015 raid card (DataStore)

If any more info is needed please let me know.

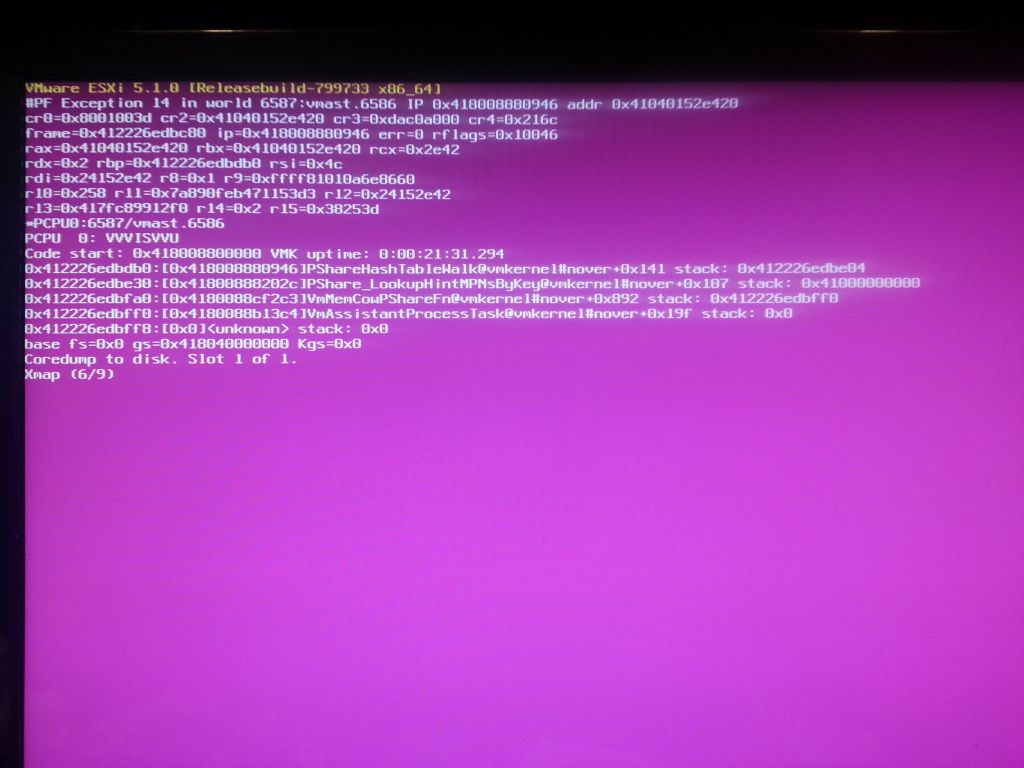

Crashed again......

Please upload the PSOD dump.

Also have a look at the below KB

vRNI TPM

Hi karthickvm,

Thank you for your reply. How do I go about getting you that log?

I have just completed one full pass of memtest to make sure there are no problems with the RAM. Again, the system was working just fine until I updated to 5.1. I did have to build a custom install image with my LAN drivers along with the LSI drivers for the RAID card, which I also did for 5.0 U1 with no issues.

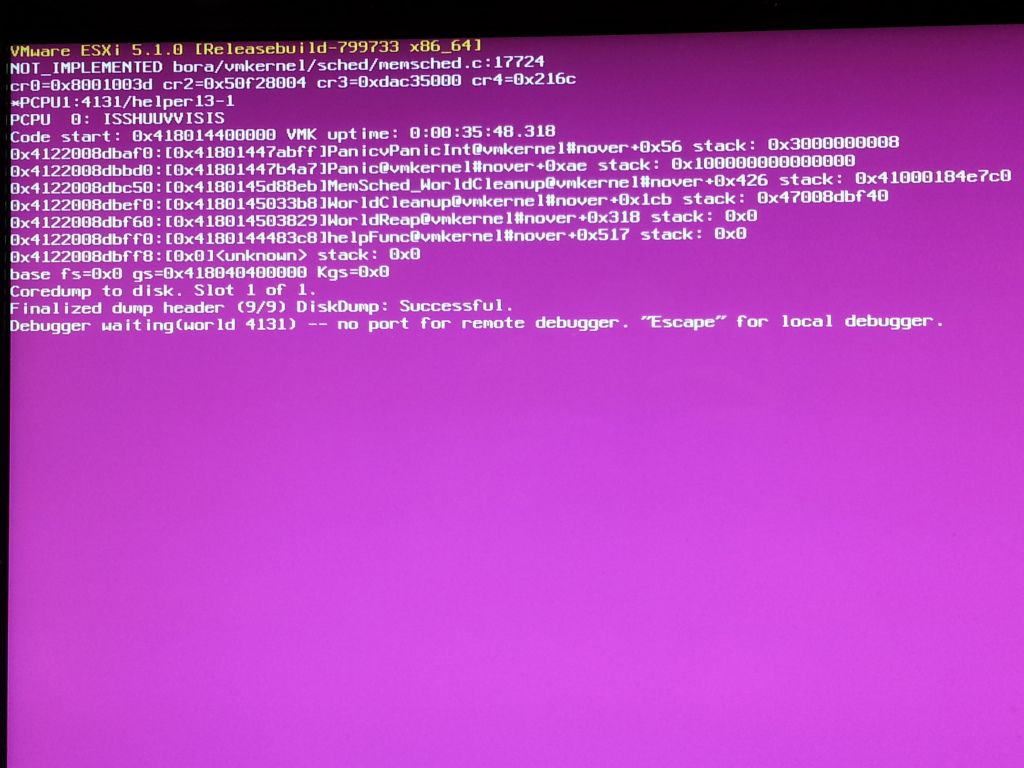

Another crash. This time the host and the VMs were just idle; I was not upgrading VMware Tools.

I have since tried a fresh install of 5.1, and the system crashed while idle with no VMs even loaded onto the host from the datastore.

I have since moved back to 5.0 U1 and the system is stable again, running 8 VMs.

I am having a similar issue on an HP BL620c G7 blade. I have upgraded all my BL460c G7 blades without any issue using "VMware-ESXi-5.1.0-799733-HP-5.30.28" from VMware downloads.

Has anybody faced the same issue on an HP BL620c G7?

The Exception 14 PSOD has typically been, in my experience, caused by the VAAI Offload function. I've usually seen it in a View environment, and in a hardware configuration using an HP EVA series SAN with any of the newer firmware revisions (anything starting with a 1, such as 10001000 or 11001000). The older firmware revisions don't support VAAI offload, so no PSOD when using them.

There isn't a lot of detail about your hardware or software configurations, but this might be at least a starting point - you're likely looking for latency issues with your SAN, and likely are in some fashion using the VAAI offload function.

You can disable VAAI offload on each host as a troubleshooting step:
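For reference, VAAI offload is controlled by three host advanced settings, and setting each of them to 0 turns the corresponding primitive off. Here is a minimal Python sketch that only prints the `esxcli` command lines you would then run in the ESXi shell; it does not touch the host itself. The helper name `vaai_commands` is made up for illustration, and you should check the option names against VMware's documentation for your build before using them.

```python
# Sketch only: builds the esxcli command lines, it does not execute anything.
# The three advanced options below are the VAAI block primitives
# (full copy, block zeroing, hardware-assisted locking).
VAAI_OPTIONS = [
    "/DataMover/HardwareAcceleratedMove",
    "/DataMover/HardwareAcceleratedInit",
    "/VMFS3/HardwareAcceleratedLocking",
]


def vaai_commands(enable=False):
    """Return one esxcli command per VAAI option (0 = disabled, 1 = enabled)."""
    value = 1 if enable else 0
    return [
        f"esxcli system settings advanced set --int-value {value} --option {opt}"
        for opt in VAAI_OPTIONS
    ]


if __name__ == "__main__":
    # Print the commands that disable VAAI for troubleshooting.
    for cmd in vaai_commands(enable=False):
        print(cmd)
```

Calling `vaai_commands(enable=True)` gives the commands to turn the settings back on once testing is done.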

Hopefully this helps...

Jes

I have a similar problem after updating to ESXi 5.1. I run 8 virtual machines, and one of them is RouterOS.

Strange situation: when the server restarts and the virtual machines start (with RouterOS not started), everything works. But when I start the RouterOS (MikroTik) machine, it starts fine, and when I turn it off, the task shows 90% and ESXi shows a purple screen.

Any ideas why it is not working with RouterOS? With 5.0 U1 there was no problem; everything worked well. Picture attached.

Another problem: I rolled back to ESXi 5.0, and when ESXi started, my virtual machines were showing as unknown. I removed them from the inventory and tried to add them back, but once they were added I could not change their configuration. I guess the problem is that my virtual machines are hardware version 9, not 8, and ESXi 5.0 does not work with version 9. Then I updated to 5.1 again and successfully added them to the inventory.
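The hardware version guess matches how the releases behave: ESXi 5.0 runs virtual hardware up to version 8, while version 9 arrived with 5.1. As a rough illustration, the Python sketch below reads the `virtualHW.version` key from the text of a `.vmx` file and checks it against the target release. The function names and the sample text are made up for the example; copy the `.vmx` off the datastore and read it on a workstation.

```python
import re

# Highest virtual hardware version each release can run.
MAX_HW_VERSION = {"5.0": 8, "5.1": 9}


def hw_version(vmx_text):
    """Return virtualHW.version from .vmx text, or None if the key is absent."""
    match = re.search(
        r'^\s*virtualHW\.version\s*=\s*"(\d+)"',
        vmx_text,
        re.MULTILINE | re.IGNORECASE,
    )
    return int(match.group(1)) if match else None


def can_run(vmx_text, esxi_release):
    """True if the VM's hardware version is supported by the given release."""
    version = hw_version(vmx_text)
    return version is not None and version <= MAX_HW_VERSION[esxi_release]


# Hypothetical .vmx excerpt for a VM that was upgraded to version 9.
sample = 'config.version = "8"\nvirtualHW.version = "9"\n'
print(can_run(sample, "5.0"))
print(can_run(sample, "5.1"))
```

A VM that fails this check for 5.0 has to stay on 5.1 or be recreated at the older hardware version, since the upgrade is not meant to be reversed.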

I can't find any information on why it is not working with RouterOS. Is there any solution?

Another problem with ESXi 5.1 is these events:

Device mpx.vmhba2:C0:T1:L0 performance has deteriorated. I/O latency increased from average value of 2161 microseconds to 65114 microseconds.

warning, 2012-09-22 03:51:43

Lost access to volume 4e97d0df-702389fa-cec2-0090fb17ba6a (T2) due to connectivity issues. Recovery attempt is in progress and outcome will be reported shortly.

info, 2012-09-22 09:56:21, T2
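To put the latency warning in perspective, here is a small Python sketch that pulls the device name and the two latency values out of the event text and works out how large the jump is. The regular expression is written against the message wording quoted in this post only; `latency_change` is a made-up helper name, and other builds may phrase the event differently.

```python
import re

# Matches the "performance has deteriorated" event text as quoted above.
PATTERN = re.compile(
    r"Device (?P<device>\S+) performance has deteriorated\. "
    r"I/O latency increased from average value of (?P<before>\d+) microseconds "
    r"to (?P<after>\d+) microseconds"
)


def latency_change(message):
    """Return (device, before_us, after_us, ratio), or None if no match."""
    # Collapse line breaks so wrapped event text still matches.
    match = PATTERN.search(" ".join(message.split()))
    if match is None:
        return None
    before = int(match.group("before"))
    after = int(match.group("after"))
    return match.group("device"), before, after, after / before


event = (
    "Device mpx.vmhba2:C0:T1:L0 performance has\n"
    "deteriorated. I/O latency increased from average\n"
    "value of 2161 microseconds to 65114\n"
    "microseconds."
)
device, before, after, ratio = latency_change(event)
print(f"{device}: {before / 1000:.1f} ms -> {after / 1000:.1f} ms ({ratio:.1f}x)")
```

For the event above that is a jump from about 2.2 ms to about 65.1 ms, roughly a thirtyfold increase.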

I have the exact same issue. Mine happens when I boot turnkey linux distributions on 5.1.

My process for adding the turnkey machines was making a new Debian 6 32bit vm with no hard drive. Then dumping the vmdk in the folder. Then adding it as the hard drive to the virtual machine. These have worked fine in 5.0 for a long time.

After upgrading to 5.1, I can start them, but they fail to connect to the console. Then they freeze at 95%. Shortly thereafter, I get a purple screen with exception 14.

I tried upgrading vm hardware to v9, but ran into the same problem as the previous poster (recreating all my vms) after I had to roll back.

I wish I had a resolution other than roll back!

Hi Gezura,

It looks like this does not only affect RouterOS. I'm using pfSense 2.0.1, and ESXi 5.1 shows the purple screen every single time I shut down or restart the software router VM. Have you found any solution yet besides rolling back to 5.0.1?

Many thanks

Netware00

I have two pfsense 64bit vm's in addition to my turnkey linux machines. It could be that they are the cause of the purple screen as well.

How many vNICs do you have attached to your router VMs (pfSense)?

I am using one extra NIC, an Intel EXPI9402PTBLK Dual Port Gigabit Copper NIC. The ESXi 5.1 host is built with one Broadcom NIC (onboard) and the two Intel ports on the EXPI9402PTBLK. I tried the HP custom image, which did not help. I also moved WAN, LAN, and DMZ to different NICs, with the same negative result.

Anyone have a resolution to this?

I have a custom host with a Tyan motherboard, so this does not seem to be a hardware-dependent issue.

I have two pfSense machines running. One has 2 vnics (wan and lan), and the other has 4 vnics. They share physical nics.

They work great in 5.0. In 5.1, they cause a purple screen.

Are you using any passthrough devices?
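If you are not sure, one quick way to check is to look in each VM's `.vmx` file for `pciPassthruN.present = "TRUE"` entries. Below is a rough Python sketch that scans the text of a `.vmx` file for them. The function name and the sample content are made up for illustration, and it assumes you have copied the `.vmx` off the datastore to a workstation.

```python
import re


def passthrough_devices(vmx_text):
    """Return the indices N of pciPassthruN entries marked present."""
    pattern = re.compile(
        r'^\s*pciPassthru(\d+)\.present\s*=\s*"TRUE"',
        re.MULTILINE | re.IGNORECASE,
    )
    return sorted(int(n) for n in pattern.findall(vmx_text))


# Hypothetical .vmx excerpt: one active passthrough device, one disabled slot.
sample = (
    'displayName = "router"\n'
    'pciPassthru0.present = "TRUE"\n'
    'pciPassthru0.id = "04:00.0"\n'
    'pciPassthru1.present = "FALSE"\n'
)
print(passthrough_devices(sample))
```

An empty list means the VM has no passthrough device configured, in which case the KB probably does not apply to it.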

See the KB article below:

ESXi 5.1 host fails with a purple diagnostic screen when powering on a virtual machine with a PCI passthru device

Symptoms

ESXi 5.1 host fails with a purple diagnostic screen when a virtual machine configured with a PCI passthru device is powered on

Resolution

My issue ended up being the TurnKey Linux machines.

For whatever reason, when they were imported, the hard drives were set up as IDE. I didn't notice any performance issues at the time because they were all very small virtual machines.

After backing everything up, I converted the vmdk files to lsilogic adapters instead of IDE. Then, I reattached the hard drives. After this, not only did they work without purple screening the host, they work a lot faster. I did not change the geometry of the drives yet.
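The post does not say exactly how the conversion was done. One commonly described approach is to edit the small text descriptor `.vmdk` (not the `-flat.vmdk` data file) and change its `ddb.adapterType` line from `"ide"` to `"lsilogic"`. The Python sketch below does that on the descriptor text, assuming this is the method meant; `set_adapter_type` and the sample descriptor are made up for illustration. Like the conversion described above, it leaves the geometry lines alone. Work on a backup copy, with the VM powered off and the disk detached.

```python
import re


def set_adapter_type(descriptor_text, new_type="lsilogic"):
    """Return descriptor text with ddb.adapterType changed to new_type."""
    pattern = re.compile(r'^(\s*ddb\.adapterType\s*=\s*)"[^"]*"', re.MULTILINE)
    new_text, count = pattern.subn(
        lambda m: f'{m.group(1)}"{new_type}"', descriptor_text
    )
    if count != 1:
        # Refuse to guess if the line is missing or duplicated.
        raise ValueError(f"expected one ddb.adapterType line, found {count}")
    return new_text


# Hypothetical descriptor excerpt for a disk that was imported as IDE.
sample = (
    "# Disk DescriptorFile\n"
    "version=1\n"
    'ddb.adapterType = "ide"\n'
    'ddb.virtualHWVersion = "8"\n'
)
print(set_adapter_type(sample))
```

After editing, the disk is re-added to the VM on a SCSI controller, which matches the reattach step described above.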

Mine was probably an isolated case due to improperly importing vmdk files in the first place.

Are you using the HP ESXi build or the native ESXi? If the latter, download HP's build and use that; it will include the necessary VIBs to run on those machines.

VMware Communities User Moderator

Blog: http://www.planetvm.net

Contributing author on VMware vSphere and Virtual Infrastructure Security: Securing ESX and the Virtual Environment

Contributing author on VCP VMware Certified Professional on VSphere 4 Study Guide: Exam VCP-410