unexplained high write latencies

Hello everybody!

I have a little problem and need your help with it 😉

We're running a small 2-node vSAN metro cluster, distributed over two datacenters, with a virtual witness host for our dedicated DMZ. The vSAN cluster was set up in the classic hybrid fashion. I've now observed relatively high write latencies (up to 50 ms) on almost all VMs, or at least on those that issue a moderate amount of parallel I/O.

I tried to narrow the issue down by monitoring the latencies at the different layers of the stack. From the host's perspective there is no congestion at the VMkernel layer, the storage controller, or the cache disks, and I found no high latencies there. So the issue has to be somewhere between the VMkernel and the guest OS.

My next guess was the network, since all writes get mirrored to the other host. The problem is that I know of no counter that shows the replication latency in a vSAN environment. So I tested it with the esxcli network diag command, sending pings with a 4k payload, and the round-trip time is about 1 ms. So nothing found there either. Do you have any ideas, or did I overlook something?

Thanks & Regards

Manuel

Good morning, I'm wondering if you are having a high cache miss ratio. Do you have the stat? Thank you, Zach.

Hi Manuel,

Is the 50 ms the average latency or the maximum? What workload and tool are you using? You can check the vSAN 6.2 performance tab for more information. Check the latency at the different levels (vSAN client, vSAN backend, disk group). I hope you are on the latest release, VMware ESXi 6.0 Patch Release ESXi600-201608001. If not, you should fix that first.

If you have only a few VMs, you can try striping the objects. If you want to optimize performance even further, you can disable checksum.

BR

Hi zdickinson

I haven't really investigated the reads so far, because the highest latencies are on writes.

Regards

Manuel

Hi vtonev

It is, or was, the average. I'm not using a tool at the moment; it's the workload from our servers. The backend mostly looks very good; only the frontend shows high latencies. But I also sometimes see delayed I/Os on the disk group, which looks as if the vSAN stack has a problem issuing the I/Os fast enough.

Regards

Manuel

I just took a look at the vSAN view in esxtop. There is not much going on at the moment, but if you look at the Owner column, there was a latency of over 18 ms for these few writes:

And am I right in assuming that the "Client", "Owner", "CompMgr", and device latencies combined are what the VM observes?

Regards

Manuel

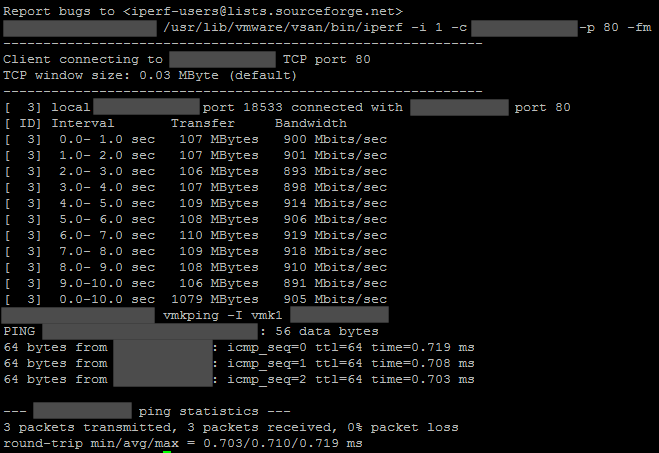

Your latency is probably in the network, but not only there. The device latency is 0.6 ms, which is OK. So test your bandwidth and latency:

on host1# /usr/lib/vmware/vsan/bin/iperf.copy -s -B vsanIP1 -p 80

on host2# /usr/lib/vmware/vsan/bin/iperf -i 1 -c vsanIP1 -p 80 -fM

on host2# vmkping -I vmk1 vsanIP1
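
An average round-trip time can hide occasional spikes, so it may help to look at the minimum, mean, and maximum of the individual ping samples. The following is a minimal Python sketch, assuming the captured vmkping output uses the usual ping-style `time=... ms` field; the function name `summarize_rtt` and the sample lines (with a documentation IP address) are hypothetical and not taken from this thread.

```python
import re
import statistics

# Matches the "time=0.912 ms" field of ping-style output (assumed format).
RTT_PATTERN = re.compile(r"time=([0-9]*\.?[0-9]+)\s*ms")


def summarize_rtt(ping_output: str) -> dict:
    """Return sample count plus min, mean, and max RTT in milliseconds."""
    samples = [float(m.group(1)) for m in RTT_PATTERN.finditer(ping_output)]
    if not samples:
        raise ValueError("no RTT samples found in the given output")
    return {
        "count": len(samples),
        "min_ms": min(samples),
        "mean_ms": statistics.mean(samples),
        "max_ms": max(samples),
    }


# Hypothetical sample output, for illustration only.
SAMPLE = """\
64 bytes from 192.0.2.10: icmp_seq=0 ttl=64 time=0.912 ms
64 bytes from 192.0.2.10: icmp_seq=1 ttl=64 time=1.104 ms
64 bytes from 192.0.2.10: icmp_seq=2 ttl=64 time=7.250 ms
"""

if __name__ == "__main__":
    print(summarize_rtt(SAMPLE))
```

A large gap between the mean and the maximum would suggest intermittent network delays rather than a uniformly slow link.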

Hi vtonev

Thanks for your response. I will run these tests tomorrow, since it is late in the evening here in Switzerland 😉

What I already did was set up a ping with a 4k payload from one host to the other, and I got a round-trip time of about 1 ms. But I will run your tests anyway.

Just for my understanding: the "CompMgr" value isn't really the device latency, is it? Are all three of these roles combined, in addition to the device/kernel latency (GAVG in esxtop), the latency that the VMs observe? And if I set failures to tolerate to 0 and move the VM to the host where the VMDK resides, that would exclude the network from the performance test, right?

Regards

Manuel

Hey vtonev

I just ran your tests:

At the moment we have just 1 Gbit/s to the second datacenter on this network, but we will have 2 Gbit/s in a while. I think this should be enough for these few servers, and the round-trip time is also low.

Then I took another look at esxtop:

As you can see, we have incredibly high latencies across the whole DOM, even though we have more than enough server resources. Do you have any other ideas where the latencies are coming from?

Regards

Manuel
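
To put the 1 Gbit/s inter-datacenter link into numbers, here is a rough Python sketch of the theoretical ceiling for mirrored writes. It assumes 4 KiB writes (matching the 4k ping payload used earlier) and ignores all protocol overhead, so real limits will be lower; `link_budget` is a hypothetical helper name.

```python
def link_budget(link_gbit_per_s: float, io_size_bytes: int) -> dict:
    """Theoretical per-link limits, ignoring protocol overhead (assumption)."""
    bits_per_s = link_gbit_per_s * 1e9
    bytes_per_s = bits_per_s / 8
    return {
        # Raw throughput ceiling in decimal megabytes per second.
        "max_mb_per_s": bytes_per_s / 1e6,
        # How many I/Os of this size fit through the link per second.
        "max_iops": bytes_per_s / io_size_bytes,
        # Time to put a single I/O on the wire, in microseconds.
        "serialization_us": io_size_bytes * 8 / bits_per_s * 1e6,
    }


if __name__ == "__main__":
    for gbit in (1, 2):
        print(gbit, "Gbit/s:", link_budget(gbit, 4096))
```

At 1 Gbit/s this works out to roughly 125 MB/s, about 30,500 writes of 4 KiB per second, and about 33 µs to serialize one such write. So for a light workload the raw link speed alone would not explain latencies in the tens of milliseconds, unless the link is saturated by other traffic.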

I just took a look at one of the vSAN trace log files, where I found several entries like this one:

DOMTraceOperationNeedsRetry:3630: {'status': 'VMK_STORAGE_RETRY_OPERATION', 'objUuid': '7317bf57-3e75-a0d3-5e08-ecb1d7b22c78', 'obj': '0x43a5c24428c0', 'op': '0x43a5b0851c80'}
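
To see whether such retries cluster on particular objects, the entries could be tallied per object UUID. This is only a sketch: it assumes the traces are already available as text lines in exactly the shape shown above (a trace name, a number, then a Python-style dictionary), and the helper names `parse_trace_line` and `count_retries` are made up for illustration.

```python
import ast
from collections import Counter

RETRY_STATUS = "VMK_STORAGE_RETRY_OPERATION"


def parse_trace_line(line: str):
    """Split 'Name:number: {...}' into (name, fields); None if it does not fit."""
    head, sep, payload = line.partition(": {")
    if not sep:
        return None
    name = head.split(":", 1)[0].strip()
    try:
        # The payload looks like a Python dict literal, so parse it safely.
        fields = ast.literal_eval("{" + payload.strip())
    except (ValueError, SyntaxError):
        return None
    if not isinstance(fields, dict):
        return None
    return name, fields


def count_retries(lines) -> Counter:
    """Count retry entries per object UUID."""
    counts = Counter()
    for line in lines:
        parsed = parse_trace_line(line)
        if parsed is None:
            continue
        _name, fields = parsed
        if fields.get("status") == RETRY_STATUS:
            counts[fields.get("objUuid")] += 1
    return counts


if __name__ == "__main__":
    entry = (
        "DOMTraceOperationNeedsRetry:3630: {'status': 'VMK_STORAGE_RETRY_OPERATION', "
        "'objUuid': '7317bf57-3e75-a0d3-5e08-ecb1d7b22c78', "
        "'obj': '0x43a5c24428c0', 'op': '0x43a5b0851c80'}"
    )
    print(count_retries([entry, entry, "unrelated line"]).most_common())
```

If a handful of UUIDs account for most of the retries, those objects would be the first place to look.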

Hi Manuel,

What is your policy? Did you try disabling checksum?

BR

Check if you have enough memory available on the host and see if there is any congestion on the client level. The policy should be compliant.

Hey vtonev

I just disabled it. It doesn't look much better at the moment, but I will continue to monitor it. The VMs are all compliant.

Here is the resource usage of the cluster:

That should not be the bottleneck. By "client", do you mean the VMs?

Regards

Manuel

The checksum was probably being recalculated in the background. Keep monitoring it: when you turn checksum back on, all the checksum data has to be calculated again. I assume this process was triggered in your case and the latency on the client side increased. You can test this by creating new VMs with checksum on by default; such a new VM should show no performance impact.

Hi vtonev

It is deactivated at the moment, but we observe the same high latencies as before... Any more ideas?

Regards

Manuel

Not many.

1. Maybe check at the VM level in esxtop ("v"): create a new VM and monitor the write latency for that particular VM.

2. Open a support request with GSS.

1- I recommend using vSAN Observer to look at the whole picture.

2- Check that the hosts are not hitting the read cache miss issue (I've seen it a lot lately).

How to check - http://wp.me/p4PIqD-ez

3- Highly recommended to go to the P3 version, as it fixes a lot of bugs.

Okay, thank you anyway for your help!

Hi dvdmorera

I tried vSAN Observer, but didn't see anything special there...

But thanks for the link. I just checked, and it was enabled. I've just turned it off, but it doesn't look much better at the moment. I will continue to monitor it.

Regards

Manuel