NSX Upgrade Issue

Hi,

I've upgraded NSX Manager from 6.3.3 build 6276725 to 6.3.3 build 7087283. After the NSX Manager upgrade, the controllers disappeared from NSX Controller nodes in Networking & Security. When I log in to NSX Manager via SSH and issue the "show controller list all" command, all three controllers are listed, but their state is UNKNOWN. I tried to delete one controller through the API with the DELETE /api/2.0/vdn/controller/{controllerId} method. After that it stayed in the REMOVING state, and I had to delete it manually from vCenter even though I had also tried to force-delete it via the API. Nevertheless, the NSX deployment seems to be functioning with two controllers in the UNKNOWN state and one in the REMOVING state. Any ideas?
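For reference, the DELETE call mentioned above can be sketched with only the Python standard library. This is a minimal sketch that builds the request without sending it; the manager hostname, controller ID and credentials are hypothetical, HTTP Basic authentication is assumed, and the forceRemoval query parameter is an assumption to confirm against the NSX API guide for your build.

```python
import base64
import urllib.request


def build_delete_controller_request(manager: str, controller_id: str,
                                    user: str, password: str,
                                    force: bool = False) -> urllib.request.Request:
    """Build (but do not send) a DELETE request for one NSX controller."""
    url = f"https://{manager}/api/2.0/vdn/controller/{controller_id}"
    if force:
        # Assumption: forced removal is requested with this query parameter;
        # verify it in the NSX API guide for the installed version.
        url += "?forceRemoval=true"
    # Assumption: the NSX Manager API accepts HTTP Basic authentication.
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    return urllib.request.Request(
        url,
        method="DELETE",
        headers={"Authorization": f"Basic {token}"},
    )


# Hypothetical manager name, controller ID and credentials.
req = build_delete_controller_request("nsxmgr.example.local", "controller-9",
                                      "admin", "secret", force=True)
print(req.get_method(), req.full_url)
```

To actually send it, pass the request to urllib.request.urlopen(req, context=...) with an SSL context that trusts the NSX Manager certificate.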

Thanks


Can you please share the output of "show control-cluster status" ?


Sure:

```
VMware NSX Controller 6.3.3 Build (6235594)
nsx-controller # show control-cluster status
Type                Status                                     Since
--------------------------------------------------------------------------------
Join status:        Join complete                              10/26 15:33:48
Majority status:    Connected to cluster majority              10/26 15:33:31
Restart status:     This controller can be safely restarted    10/26 15:33:54
Cluster ID:         8891625e-d0a9-4960-8eb7-a145e3106da5
Node UUID:          fb2f4dd5-6533-4f90-9631-43bbb286f469

Role                Configured status     Active status
--------------------------------------------------------------------------------
api_provider        enabled               activated
persistence_server  enabled               activated
switch_manager      enabled               activated
logical_manager     enabled               activated
directory_server    enabled               activated
----------------------------
Cluster status from vnet-controller:
----------------------------
Active cluster members
isMaster: true
uuid=a35b94e7-2770-470d-83c0-5e466ce442f7, ip=10.60.1.222
uuid=fb2f4dd5-6533-4f90-9631-43bbb286f469, ip=10.60.1.221
Configured cluster members
uuid=a35b94e7-2770-470d-83c0-5e466ce442f7, ip=10.60.1.222
uuid=fb2f4dd5-6533-4f90-9631-43bbb286f469, ip=10.60.1.221
uuid=75af2c80-cea9-4073-a504-e390b6180607, ip=10.60.1.223
----------------------------
controllerd.info
0000000: fb2f 4dd5 6533 4f90 9631 43bb b286 f469 ./M.e3O..1C....i
0000010: 0d00 0000 0000 0000 0300 0000 0000 0000 ................
0000020: 7a6b 636c 6973 3000 0031 302e 3630 2e31 zkclis0..10.60.1
0000030: 2e32 3231 3a37 3737 372c 3130 2e36 302e .221:7777,10.60.
0000040: 312e 3232 323a 3737 3737 2c31 302e 3630 1.222:7777,10.60
0000050: 2e31 2e32 3233 3a37 3737 3700 0000 0000 .1.223:7777.....
0000060: 0000 0000 0000 0000 0000 7a6b 636c 6964 ..........zkclid
0000070: 0000 0038 3839 3136 3235 652d 6430 6139 ...8891625e-d0a9
0000080: 2d34 3936 302d 3865 6237 2d61 3134 3565 -4960-8eb7-a145e
0000090: 3331 3036 6461 3500 0000 0000 0000 0000 3106da5.........
00000a0: 0000 0000 0000 0000 0000 0000 0000 0000 ................
00000b0: 0000 0000 7a6b 7376 3000 0000 0031 302e ....zksv0....10.
00000c0: 3630 2e31 2e32 3231 3a37 3737 372c 3130 60.1.221:7777,10
00000d0: 2e36 302e 312e 3232 333a 3737 3737 2c31 .60.1.223:7777,1
00000e0: 302e 3630 2e31 2e32 3232 3a37 3737 3700 0.60.1.222:7777.
00000f0: 0000 0000 0000 0000 0000 0000 0000 ..............
----------------------------
/var/log is writable: True
/var/cloudnet/data is writable: True
/var/cloudnet/cluster is writable: True
```
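The telling part of this output is the difference between the two member lists: three controllers are configured, but only two are active. A minimal Python sketch that pulls both lists out of the pasted text and reports configured members that are not active; the parsing assumes exactly the line format shown in this output and is not an official tool.

```python
import re

# Member lists copied from the "show control-cluster status" output above.
STATUS = """\
Active cluster members
isMaster: true
uuid=a35b94e7-2770-470d-83c0-5e466ce442f7, ip=10.60.1.222
uuid=fb2f4dd5-6533-4f90-9631-43bbb286f469, ip=10.60.1.221
Configured cluster members
uuid=a35b94e7-2770-470d-83c0-5e466ce442f7, ip=10.60.1.222
uuid=fb2f4dd5-6533-4f90-9631-43bbb286f469, ip=10.60.1.221
uuid=75af2c80-cea9-4073-a504-e390b6180607, ip=10.60.1.223
"""

MEMBER = re.compile(r"uuid=([0-9a-f-]+), ip=([\d.]+)")


def members_by_section(text: str) -> dict[str, dict[str, str]]:
    """Group uuid -> ip pairs under the most recent '... cluster members' header."""
    sections: dict[str, dict[str, str]] = {}
    current = None
    for line in (raw.strip() for raw in text.splitlines()):
        if line.endswith("cluster members"):
            current = line
            sections[current] = {}
        elif current and (m := MEMBER.search(line)):
            sections[current][m.group(1)] = m.group(2)
    return sections


sections = members_by_section(STATUS)
configured = sections["Configured cluster members"]
active = sections["Active cluster members"]
# Configured members that are not currently part of the active cluster.
missing = {uuid: ip for uuid, ip in configured.items() if uuid not in active}
print(missing)
```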


Hi

Did you change the controllers' admin password manually? If so, change it back. You might need to access the controller's engineering mode to do so. Here's a very useful blog post.

If this happens in a production environment, my suggestion is to open a support case.

If this happens in a test environment, you can check whether it's due to controller password expiry.

Check if the following VMware KB article helps: VMware Knowledge Base

Please consider marking this answer "correct" or "helpful" if you think your question has been answered correctly.

Regards,

Min Zhang


Hi dyadin,

Thanks for your response. I didn't change the controllers' password manually. I've already tried applying the script from the KB you referred me to, but to no avail. The blog post says to issue the debug os-shell command to enter engineering mode, but when I enter this command on the controller I get "ERROR: Invalid command or argument. Valid values are:", so I'm unable to enter this mode.


The command should be ": debug os-shell"; there's a colon before "debug".


I'd also suggest rebooting all three controller nodes to see whether the connection state turns to Connected.

What's the status of the three controllers now? Does the removal still appear to be in progress?


This command isn't recognized either.


There's a SPACE between ":" and "debug".


In the vSphere Web Client the controllers do not show up at all. If I log in to NSX Manager using SSH and issue "show controller list all", only two controllers are listed, and their state is UNKNOWN.


Have you tried to reboot controllers?


Thanks for the assistance. I've finally managed to spell the command correctly. When it asked me for a password, I entered the one I'd obtained from NSX Manager using the /home/secureall/secureall/sem/WEB-INF/classes/GetNvpApiPassword.sh controller-nn command, and it told me the account has expired. I'm unsure what to do next and afraid of blowing up the system completely.


Both of your problems have happened in my environment and in my customer's.

First, after upgrading NSX Manager (NSX 6.3.1 to NSX 6.3.2), the controller status became UNKNOWN. I fixed it by rebooting.

Second, in NSX 6.3.3 one controller became disconnected and rebooting didn't solve the problem, so I tried to delete the controller, but that failed: the controller VM was still there, but it was gone from vCenter > Networking & Security. I had to delete that controller manually from the inventory. Then I tried to add the third controller, but that failed because of a bug (refer to KB 51144). I fixed it via Re: Deploying NSX Controller 6.3.3 - no ip assigned

I told you to access the controllers because I assumed you had changed the controllers' admin password manually (I did so myself in 6.3.3 because the password expired). If you change the controllers' admin password, the controller status also becomes UNKNOWN.


I tried the procedure in KB 51144. The first script applied successfully, but when I issued the second POST I got 200 OK with the following in the response body:

```
# of controllers: 2
------------------------------------------------------------------
Fix controller: {Controller: 10.60.1.222 [controller-8], apiUser: admin }
live prompt: <Password: >, SEND reply: <??>
live prompt: <Password: >, SEND reply: <??>
live prompt: <Password: >, SEND reply: <??>
live prompt: <Password: >, SEND reply: <??>
live prompt: <Password: >, SEND reply: <??>
ERROR in connect: SSH_MSG_DISCONNECT: 2 Too many authentication failures
script failed: com.jcraft.jsch.JSchException: SSH_MSG_DISCONNECT: 2 Too many authentication failures
------------------------------------------------------------------
Fix controller: {Controller: 10.60.1.221 [controller-7], apiUser: admin }
live prompt: <Password: >, SEND reply: <??>
live prompt: <Password: >, SEND reply: <??>
live prompt: <Password: >, SEND reply: <??>
live prompt: <Password: >, SEND reply: <??>
live prompt: <Password: >, SEND reply: <??>
ERROR in connect: SSH_MSG_DISCONNECT: 2 Too many authentication failures
script failed: com.jcraft.jsch.JSchException: SSH_MSG_DISCONNECT: 2 Too many authentication failures
Patch script completed successfully.
```

My guess is that the admin password NSX Manager holds for the controllers differs from the actual admin password on the controllers, hence the authentication failures. How can I change the admin password so that it matches on both sides?
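Note that the script ends with "Patch script completed successfully." even though both controllers failed, so the per-controller lines are what matter. A minimal Python sketch of a hypothetical helper, summarize(), that classifies each controller as fixed or failed; it assumes the output format posted in this thread (abbreviated copies of both the failed run above and a successful run are embedded as samples).

```python
import re

# Abbreviated copy of the failed run posted above.
FAILED_RUN = """\
# of controllers: 2
Fix controller: {Controller: 10.60.1.222 [controller-8], apiUser: admin }
live prompt: <Password: >, SEND reply: <??>
ERROR in connect: SSH_MSG_DISCONNECT: 2 Too many authentication failures
script failed: com.jcraft.jsch.JSchException: SSH_MSG_DISCONNECT: 2 Too many authentication failures
Fix controller: {Controller: 10.60.1.221 [controller-7], apiUser: admin }
live prompt: <Password: >, SEND reply: <??>
ERROR in connect: SSH_MSG_DISCONNECT: 2 Too many authentication failures
script failed: com.jcraft.jsch.JSchException: SSH_MSG_DISCONNECT: 2 Too many authentication failures
Patch script completed successfully.
"""

# Abbreviated copy of a successful run in the same format.
FIXED_RUN = """\
# of controllers: 2
Fix controller: {Controller: 10.60.1.222 [controller-8], apiUser: admin }
root access: OK
Controller: 10.60.1.222 fixed.
Fix controller: {Controller: 10.60.1.221 [controller-7], apiUser: admin }
root access: OK
Controller: 10.60.1.221 fixed.
Patch script completed successfully.
"""

HEADER = re.compile(r"^Fix controller: \{Controller: ([\d.]+) ")


def summarize(output: str) -> dict[str, str]:
    """Map each controller IP to 'fixed', 'failed' or 'unknown'."""
    status: dict[str, str] = {}
    ip = None
    for line in (raw.strip() for raw in output.splitlines()):
        if m := HEADER.match(line):
            ip = m.group(1)
            status[ip] = "unknown"  # until a verdict line is seen
        elif ip and line.startswith("script failed"):
            status[ip] = "failed"
        elif ip and line == f"Controller: {ip} fixed.":
            status[ip] = "fixed"
    return status


print(summarize(FAILED_RUN))
print(summarize(FIXED_RUN))
```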


Let me give an example:

Say I have an NSX 6.3.3 deployment from 2017, and at the beginning I set the controller cluster admin password to VMware,1234.

As described in KB 51144, 6.3.3 has a bug that automatically expires the admin password. If ANYONE tries to log in to a controller, it says the password has expired and must be reset, and that SOMEONE may think it's fine to change the admin password, while it's not. Suppose he changes VMware,1234 to VMware1! (and of course doesn't tell you). NSX Manager doesn't know that the new admin password is VMware1!; it still connects with VMware,1234, so the UNKNOWN issue appears.

To solve this, you need to enter engineering mode and use the command passwd admin to change the admin password back to its original value, VMware,1234.

You'll need to do the same for the root user: if its password has expired, change it and then change it back.

To make sure this never happens again, use passwd -x 99999 root and passwd -x 99999 admin to set the maximum password age to 99999 days, so the passwords effectively never expire.
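passwd -x sets the maximum number of days a password stays valid after its last change, so the expiry date is the last-change date plus that number. A quick Python illustration with a hypothetical last-change date shows why 99999 days (about 274 years) is effectively "never"; the 90-day value is only a hypothetical comparison, not the controller's actual policy.

```python
from datetime import date, timedelta


def expiry_date(last_change: date, max_days: int) -> date:
    """Expiry under a maximum password age: last change + max_days."""
    return last_change + timedelta(days=max_days)


# Hypothetical last-change date, roughly when this thread was posted.
last_change = date(2017, 10, 26)

print(expiry_date(last_change, 90))     # hypothetical short policy
print(expiry_date(last_change, 99999))  # the value suggested above
```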

After all that, you can try to deploy the third controller or just re-deploy all of them one by one.


dyadin,

Thank you so much for the assistance. After changing the controllers' passwords I was able to apply the second script successfully:

```
# of controllers: 2
------------------------------------------------------------------
Fix controller: {Controller: 10.60.1.222 [controller-8], apiUser: admin }
Open SSH session, channel, input, output streams
login as root...
root access: OK
run password patch ...
run certificate patch ..
Close SSH session & channel
Controller: 10.60.1.222 fixed.
------------------------------------------------------------------
Fix controller: {Controller: 10.60.1.221 [controller-7], apiUser: admin }
Open SSH session, channel, input, output streams
login as root...
root access: OK
run password patch ...
run certificate patch ..
Close SSH session & channel
Controller: 10.60.1.221 fixed.
Patch script completed successfully.
```

However, in NSX Manager the controllers are now in the SERVICE_UNAVAILABLE state:

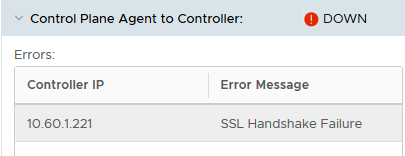

Communication between the ESXi hosts' agents and the controllers fails as well.

Seems like it's another bug. Check these out:

Control Plane Agent to Controller Down

https://captainvops.com/2018/06/12/nsx-ssl-certificate-failure-on-esxi-ssl-handshake-failed/