
DLR will not forward traffic

We have a strange issue. We have a DLR with multiple networks attached to it, and it has stopped forwarding traffic.

All of the configured networks can ping the DLR.

If you run a traceroute to an external address such as 8.8.8.8, the DLR responds with destination unreachable.

From the DLR, we can ping the next-hop ESG.

"show ip route" reports all of the expected routes.

Now for the really strange part: any VMs on the same host as the DLR work as expected and traverse the network.

VMs on other hosts can only ping the internal interface of the DLR.

---

I just ran another test with continuous pings to the internet.

When I migrated the VM to the DLR host, the pings worked.

When I migrated the VM to another host in the cluster, they failed.

---

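A ping from a VM to its gateway on the DLR is answered by the DLR kernel module on the VM's own host, so a successful gateway ping does not prove that traffic can leave the host. Kindly check the following in your environment: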

1. Controller cluster status

2. VTEP connectivity.
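For the VTEP connectivity check, a common test from the ESXi shell is `vmkping` on the VXLAN netstack with the don't-fragment bit set, sized so that each packet fills the transport MTU. The Python sketch below only builds those command strings and shows the payload arithmetic (1600 - 20-byte IPv4 header - 8-byte ICMP header = 1572). It assumes a 1600-byte transport MTU (the commonly cited minimum for VXLAN); the VTEP addresses and the vmk interface name are hypothetical placeholders, not values from this thread.

```python
# Sketch: build vmkping commands for a VTEP-to-VTEP MTU test.
# Assumption: the VXLAN transport network uses a 1600-byte MTU.
# The VTEP IPs and vmk name used below are hypothetical placeholders.

IPV4_HEADER = 20  # bytes, IPv4 header without options
ICMP_HEADER = 8   # bytes, ICMP echo header


def vmkping_payload(mtu: int) -> int:
    """Largest ICMP payload that fits in one unfragmented IPv4 packet."""
    return mtu - IPV4_HEADER - ICMP_HEADER


def vtep_ping_commands(remote_vteps, mtu=1600, vmk=None):
    """Return one vmkping command per remote VTEP.

    -d sets don't-fragment, so an undersized MTU anywhere on the path
    makes the ping fail instead of silently fragmenting.
    """
    size = vmkping_payload(mtu)
    iface = f" -I {vmk}" if vmk else ""
    return [
        f"vmkping ++netstack=vxlan -d -s {size}{iface} {ip}"
        for ip in remote_vteps
    ]


if __name__ == "__main__":
    # Hypothetical remote VTEP addresses (documentation range).
    for cmd in vtep_ping_commands(["192.0.2.11", "192.0.2.12"], vmk="vmk3"):
        print(cmd)
```

Run the printed commands from each host against every other host's VTEP; a failure at 1572 bytes that disappears at a smaller size points at an MTU mismatch on the physical path rather than at NSX itself.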

---

Try redeploying the DLR and see if this resolves your issue.

---

Nope. We found the issue. The network team upgraded to MLAGs, and we migrated the ESXi hosts to those MLAGs. Apparently NSX doesn't take too kindly to this and is still trying to use the individual uplinks instead of the LAG.

Has anyone tried changing the NSX config through the API for this?

---

Any kind of LAG configuration for Edges is not recommended. This is already mentioned in https://www.vmware.com/content/dam/digitalmarketing/vmware/en/pdf/products/nsx/vmw-nsx-network-virtu...

Sree | VCIX-5X| VCAP-5X| VExpert 7x|Cisco Certified Specialist


---

It was working fine, and all NSX tests pass: all green on all status pages, VTEP pings, etc.

The problem is specifically with the logical router instances on each host. They have the right routes, but they are not working together, as if each netcpad were unaware of its peers (even though net-vdr shows them).

---

Hello Dr.Virt,

I am also facing the exact same problem. Did you find a solution or workaround?

I have four ESXi 6.7 U1 hosts in vCenter. vSAN is configured, and NSX 6.4.4 is deployed.

Thank you,

Ravi

---

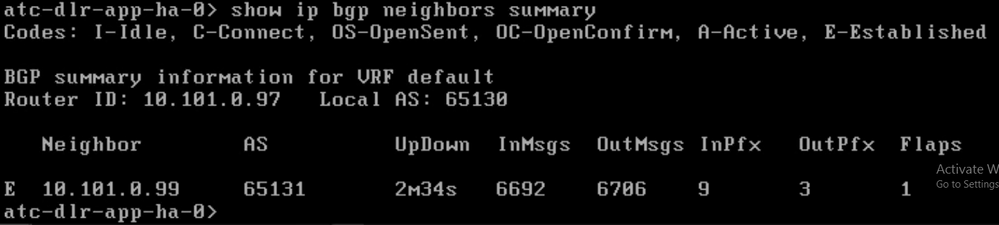

Are you using eBGP between the DLR and the ESG?

Check whether there is an established BGP session between the DLR and the ESG:

Command: `show ip bgp neighbors summary`

In my case, 10.56.41.252 and 10.56.41.253 are the ESG routers that forward packets outside NSX.

---

Hello KocPawel,

Thank you for the response.

I deployed the DLR control VM in HA mode. There is eBGP between the DLR and the ESG.

There is iBGP between the two top-of-rack switches; their AS number is 65200.

When I run a traceroute to the virtual machine, the packets reach the DLR transit uplink IP, 10.101.0.97.

The ESG downlink interface IP is 10.101.0.99.

From the VM, I can ping to all the gateways, but I cannot ping to the VMs connected to those gateways.
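Because an eBGP peering between the DLR and the ESG normally runs over a directly connected transit network, one quick sanity check is that the DLR uplink (10.101.0.97) and the ESG downlink (10.101.0.99) fall in the same subnet. The sketch below uses Python's standard `ipaddress` module; the /29 prefix length is an assumption, since the thread gives only the two addresses and not the mask configured on either interface.

```python
import ipaddress

# Assumption: a /29 transit network. The thread gives only the two
# interface addresses, so substitute the prefix actually configured.
TRANSIT_PREFIX = 29


def same_transit_subnet(ip_a: str, ip_b: str, prefix: int = TRANSIT_PREFIX) -> bool:
    """Return True if both addresses fall in the same network at this prefix length."""
    net_a = ipaddress.ip_interface(f"{ip_a}/{prefix}").network
    net_b = ipaddress.ip_interface(f"{ip_b}/{prefix}").network
    return net_a == net_b


if __name__ == "__main__":
    dlr_uplink = "10.101.0.97"    # DLR transit uplink (from the thread)
    esg_downlink = "10.101.0.99"  # ESG downlink (from the thread)
    print(ipaddress.ip_interface(f"{dlr_uplink}/{TRANSIT_PREFIX}").network)
    print(same_transit_subnet(dlr_uplink, esg_downlink))
```

With the assumed /29, both addresses land in 10.101.0.96/29, so the script prints that network and `True`. A `False` result for the prefix actually configured would point at an addressing problem on the transit segment rather than at the data path.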

There were a few issues with the NIC driver and firmware. Whenever I rebooted an ESXi host, it hit a PSOD, and when the host was reset, the management network was down, so I had to restart the management network. I resolved both issues today by upgrading the firmware and drivers per the following HPE advisory.

I have 4 ESXi hosts (6.7 U1)

NSX is 6.4.4

This is a new build. I can rebuild the DLR and ESGs, if required.

https://kb.vmware.com/s/article/2137005

https://kb.vmware.com/s/article/2133816

https://kb.vmware.com/s/article/2137011

Please help.

---

Hello KocPawel,

It seems that the NIC driver and firmware that shipped with the server were the culprits. As mentioned in my previous note, I followed the articles listed there and upgraded the NIC firmware and driver.

Then I performed the steps below. These steps are not recommended in a production environment; since this was a new deployment, I had the luxury of performing them:

1. Removed the ESG, DLR, and logical switches

2. Reset the segment IDs and deleted the transport zone

3. Uninstalled NSX in the Host Preparation tab

4. Rebooted the ESXi hosts

5. Installed NSX and configured VXLAN in the Host Preparation tab

6. Created the segment IDs and the transport zone

7. Created the logical switches, DLR, and ESG

Finally, everything is working now.

Thank you,

Ravi