- Re: the reason why VMs failed to migrate to DVS


I'm currently deploying VCF 4.1 on four server nodes and just hit a failure during the bring-up process, as shown in the figure below.

Evidently, migrating the VMs to the vSphere Distributed Switch (DVS) failed.

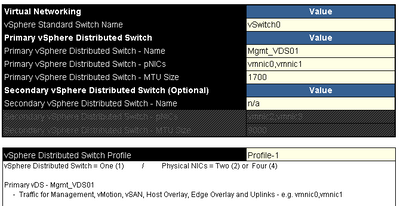

Here are my infrastructure settings:

1. I'm using a different VLAN ID for each port group/VMkernel adapter. VLAN 1021 is used for the ESXi management network, and the Cloud Builder appliance is connected to this network.

2. Only two vmnics are used for the bring-up process, as shown in the figure below. I guess both NICs will eventually be migrated to the DVS.

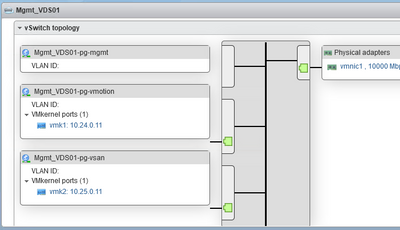

3. The default vSwitch0 already exists on each ESXi host, with its VLAN port groups working, as shown in the figure below.

I examined the configuration of the new DVS (created by the Cloud Builder bring-up process) via the ESXi host web page and found that no VLAN IDs exist on any of its port groups, as shown in the figure below.

The configuration validation did pass before I started the bring-up deployment in Cloud Builder.

I assumed that the VCF deployment from Cloud Builder would configure the VLAN IDs on each new DVS port group, but it seems it did not.

Does anyone know how to deal with the VLAN ID issue on the DVS port groups at the deployment stage? Or is there maybe another reason for this failure?

Thanks in advance!
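To make the suspected mismatch concrete, here is a minimal Python sketch that compares the VLAN IDs you planned for each network against what you actually see on the port groups. It is only an illustration: the function name, the network names, and every VLAN ID except 1021 (the management VLAN mentioned above) are made up, and the observed values would have to be collected by hand or with your own tooling.

```python
# Hypothetical data: only VLAN 1021 (management) comes from the post;
# the other network names and IDs are invented for illustration.
EXPECTED_VLANS = {
    "management": 1021,
    "vmotion": 1022,
    "vsan": 1023,
}


def find_vlan_mismatches(expected, observed):
    """Return {network: (expected_id, observed_id)} for every network whose
    observed VLAN ID is missing or differs from the expected one."""
    mismatches = {}
    for network, vlan_id in expected.items():
        seen = observed.get(network)  # None if no VLAN ID was observed
        if seen != vlan_id:
            mismatches[network] = (vlan_id, seen)
    return mismatches


if __name__ == "__main__":
    # Values as read from the port groups (hypothetical).
    observed = {"management": 1021, "vmotion": None}
    for network, (want, got) in find_vlan_mismatches(EXPECTED_VLANS, observed).items():
        print(f"{network}: expected VLAN {want}, observed {got}")
```

A non-empty result only tells you where the two views disagree; it does not say whether the bring-up or the place you looked at is at fault.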

Accepted Solutions


@CyberNils thanks for the pointer. The logs in the Cloud Builder appliance didn't help much, but they were still worth checking.

In the end I solved this problem by cleaning up the ESXi hosts and their settings, migrating the Cloud Builder VM to an independent environment, and retrying the bring-up process. Everything went fine after that!

So the verdict is: do not deploy the Cloud Builder appliance on a target management domain ESXi host, otherwise the bring-up might fail because the VM/port group operations cannot complete.

It sounds weird, but that's the only clue I have to support this conclusion.


Sorry, I haven't had this issue before, but please take a look at the log files to get more details on why this is failing:




Hi @nblr06, I am experiencing the same issue (in CB3.10, but with the same failure point and the same symptoms).

Can I ask: when you rebuilt your hosts, did you use VIA or another method? If another method, did you keep vmk0 of each host on a port group that has VLAN 1021 defined, or was VLAN 1021 untagged on the connection to the switch before you started SDDC Deploy? I've seen that VIA initially uses an untagged VLAN for provisioning and management, so I wondered if that was related...

Thanks!


As long as there are no VMs in a powered-on state, you can run the CBVM on a target host as well. We regularly deploy the CBVM on one of the four target hosts and we don't encounter this problem. Glad you fixed this yourself. If you are able to re-create this in a future attempt, I could take a look at it.
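That precondition can be sketched as a small Python pre-check. This is only an illustration under assumptions: the `VmInfo` record, the `blocking_vms` helper, and all VM and host names are hypothetical, and it assumes the rule means "no powered-on VMs other than the CBVM itself". Populating the inventory from real hosts is left out.

```python
from dataclasses import dataclass


@dataclass
class VmInfo:
    """Hypothetical inventory record; not a VMware API type."""
    name: str
    host: str
    powered_on: bool


def blocking_vms(vms, cloud_builder_name):
    """Return the powered-on VMs other than the Cloud Builder VM itself."""
    return [vm for vm in vms if vm.powered_on and vm.name != cloud_builder_name]


if __name__ == "__main__":
    # Made-up inventory for the four target hosts.
    inventory = [
        VmInfo("cloud-builder", "esxi-01", True),
        VmInfo("test-vm", "esxi-02", True),
        VmInfo("old-template", "esxi-03", False),
    ]
    for vm in blocking_vms(inventory, "cloud-builder"):
        print(f"Power off {vm.name} on {vm.host} before starting bring-up")
```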


Hi @kivlintom

Answering your questions:

1. I installed ESXi manually to rebuild the VCF hosts. I didn't use an automated installation such as VIA or PXE, because I find a manual installation easier to control.

2. The VLAN I used for ESXi management was tagged (1021), and the physical switch ports are all trunk ports. The management VLAN ID was set on the port group; it was not untagged.

If you are using a specific VLAN ID for the ESXi management network, then the port group should be configured with that ID, otherwise you will run into networking issues.
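As a rough illustration of that rule, the Python sketch below picks the VLAN ID to set on the management port group from the physical switch port mode. The function name and the mode strings are hypothetical, and it assumes the management VLAN is not the native (untagged) VLAN of the trunk. On an ESXi port group, a VLAN ID of 0 means untagged.

```python
def portgroup_vlan_id(switch_port_mode, management_vlan):
    """Return the VLAN ID to configure on the ESXi management port group.

    trunk:  frames reach the host tagged, so the port group carries the ID.
    access: the physical switch handles the tag, so the port group stays at 0.
    Assumes the management VLAN is not the trunk's native VLAN.
    """
    if not 1 <= management_vlan <= 4094:
        raise ValueError(f"invalid VLAN ID: {management_vlan}")
    if switch_port_mode == "trunk":
        return management_vlan
    if switch_port_mode == "access":
        return 0
    raise ValueError(f"unknown switch port mode: {switch_port_mode!r}")


if __name__ == "__main__":
    # The setup described above: trunk ports, management on VLAN 1021.
    print(portgroup_vlan_id("trunk", 1021))
```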

I guess that VIA (or PXE boot) often suggests using an untagged VLAN for the deployment, but that usually doesn't match what admins need. You can still set a specific VLAN ID for your ESXi management network beforehand.

I skipped the VIA part of the VCF deployment, so I'm not sure about it; please refer to the official documentation. It's worth reading.

Hope this helps.