VMware Site Recovery Manager 5 on IBM Storwize v7000


The environment:

Two vCenter Servers (as VMs) in Linked Mode, with the SRM software installed.

Clustered ESXi hosts and a number of protected VMs.

vCenter Server 5.1 U2

ESXi hosts 5.1 U2

SRM server 5.1.2

SAN

IBM Storwize v7000 (Hosts accessing storage via switched FC fabric)

SRA version: IBM Storwize family SRA 2.3.0

Current software level: Version 7.2.0.6 (build 87.9.1404290000)

Verify that you have at least 6.4.x storage microcode in place:

http://www.vmware.com/resources/compatibility/SRA
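As a quick illustration of the version check above, here is a minimal Python sketch that compares a dotted software level against the 6.4 minimum. The function names are made up for this example; the authoritative answer is always the compatibility guide linked above.

```python
def parse_version(text):
    """Turn a dotted version such as '7.2.0.6' into a tuple of ints."""
    return tuple(int(part) for part in text.strip().split("."))


def meets_minimum(current, minimum="6.4"):
    """Return True if `current` is at least `minimum`, compared field by field."""
    cur = parse_version(current)
    req = parse_version(minimum)
    # Pad the shorter tuple with zeros so '6.4' compares like '6.4.0.0'.
    width = max(len(cur), len(req))
    cur += (0,) * (width - len(cur))
    req += (0,) * (width - len(req))
    return cur >= req


if __name__ == "__main__":
    # Software level of the array described in this article.
    print(meets_minimum("7.2.0.6"))
```

Comparing integer tuples instead of raw strings avoids the classic trap where "6.10" sorts before "6.4".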

Concept schema:

Picture from: IBM Storwize Family Storage Replication Adapter Version 2.3.0 User Guide

The SETUP

(The setup steps below focus mainly on the "Non-preconfigured environment" SRA option, but most of them apply to a preconfigured environment as well.)

To clarify the IBM terminology:

The preconfigured and non-preconfigured options are all about how you, as an administrator, prepare your SAN before you start the SRA setup*. This decision later determines how the array handles FlashCopy (FC) volumes during Test Recovery.

(*with the SRA configuration utility IBMSVCSRAUtil.exe)

The final choice is up to each system or storage administrator.

< IBM FlashCopy™ is a feature that lets you create, nearly instantaneously, point-in-time snapshot copies of entire logical volumes or data sets, that is, array-based disk snapshots. >

Preconfigured environments

An environment where the storage admin creates the FC volumes beforehand, so the SRA does not need to create FC volumes for each SRM Test Recovery. Once created, the FC volumes are reused every time you run an SRM Test Recovery.

- You have to create an equal number of FlashCopy volumes on both the production and the recovery site.

- Only the “Copy Operator” user role is needed on the SAN management side, because with the FC volumes preconfigured on the array the SRA only changes mirror state and consistency groups (it doesn’t need to create FC volumes during Test Recovery).

- You have to map/present the RC (Remote Copy) volumes together with the FC volumes to the ESXi servers on the recovery site, and likewise the RC volumes together with the FC volumes to the ESXi servers on the protected site.

- As usual, the mirrors must be synchronized before any SRM operation can be run.

Pros:

- The user role the SRA uses to connect to the array does not need administrator privileges on the SAN management side (those are only needed to create FC volumes during the test and delete them in the Cleanup phase).

Cons:

- This option consumes twice as much space on the array as the non-preconfigured option.

- Higher administrative overhead for the initial configuration.

Non-preconfigured environments

An environment where the storage admin doesn’t prepare any FC volumes on the array, so during SRM Test Recovery the SRA (through a SAN administrator user role) creates all the necessary FC volumes (equal in number to the target/source volumes) and attaches them to the ESXi hosts. These volumes are discarded by the Cleanup operation after the Test Recovery completes.

- In the IBMSVCSRAUtil tool you must specify the “Test MDisk Group ID” where the SRA will create the FC volumes on demand during the Test Recovery operation.

- An MDisk group is what the management GUI calls a storage pool, so in the “Test MDisk Group ID” column enter the ID of the storage pool where the SRA will create FC volumes during Test Recovery. Ensure you have enough space in that pool for the FC volumes!

Properties view of Storage pool:

Pros:

- Although you need sufficient space inside the storage pool at the time of Test Recovery (matching the number and size of the target/source volumes), this space is not consumed permanently, because the FC volumes are detached from the hosts and deleted on the array at the end of the test (Cleanup phase).
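The space requirement described above can be checked ahead of a test with a few lines of Python. This is only a sketch: it assumes the pool listing has been exported as colon-delimited text with a header row that includes `id` and `free_capacity` (in bytes) columns, which is the general shape of the Storwize CLI pool listing (`lsmdiskgrp -bytes -delim :`; verify the command and column names against your code level). The sample data, pool names and volume sizes are invented.

```python
import csv
import io

# Invented sample in the shape of a colon-delimited pool listing with a header row.
SAMPLE_POOLS = """\
id:name:status:capacity:free_capacity
0:Pool_Prod:online:10995116277760:2199023255552
1:Pool_SRM_Test:online:5497558138880:4398046511104
"""


def load_pools(text, delimiter=":"):
    """Parse the listing into a dict keyed by pool ID (as a string)."""
    reader = csv.DictReader(io.StringIO(text), delimiter=delimiter)
    return {row["id"]: row for row in reader}


def has_room_for_test(pools, pool_id, volume_sizes_bytes):
    """True if the pool's free capacity covers one FC volume per replicated volume."""
    free = int(pools[pool_id]["free_capacity"])
    return free >= sum(volume_sizes_bytes)


if __name__ == "__main__":
    TIB = 1024 ** 4
    pools = load_pools(SAMPLE_POOLS)
    # Three replicated volumes of 1 TiB each (hypothetical sizes).
    print(has_room_for_test(pools, "1", [TIB, TIB, TIB]))
```

The check sizes for the worst case (each FC volume as large as its source volume), which matches the "equal to number and size" rule of thumb above.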

Cons:

- The user role the SRA uses to connect to the array must have administrator privileges on the array to create and delete FC volumes in the specified storage pools.

This is not the best option from a security perspective and may prevent admins from using it.

Note:

The IBM Storwize v7000 User Guide states that the “Preconfigured” option does not attach or detach volumes to or from the host. Empirically, however, both SRA options perform detach/attach operations on volumes during test and recovery operations.
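To put the two options side by side, here is a small Python summary of the trade-offs described above. The class, field names and the `pick_option` helper are made up for illustration and deliberately simplistic; the real decision also depends on available capacity and your own policies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SraOption:
    name: str
    flashcopy_volumes: str  # when the FC volumes for Test Recovery exist
    array_role: str         # role the SRA user needs on the array
    space_use: str          # how Test Recovery space is consumed


PRECONFIGURED = SraOption(
    name="Preconfigured",
    flashcopy_volumes="created beforehand by the storage admin and reused",
    array_role="Copy Operator",
    space_use="permanent",
)

NON_PRECONFIGURED = SraOption(
    name="Non-preconfigured",
    flashcopy_volumes="created by the SRA per test and deleted at Cleanup",
    array_role="Administrator",
    space_use="only during Test Recovery",
)


def pick_option(admin_role_allowed):
    """Hypothetical helper: use the preconfigured option when the SRA user
    may not hold an administrator role on the array."""
    return NON_PRECONFIGURED if admin_role_allowed else PRECONFIGURED


if __name__ == "__main__":
    choice = pick_option(admin_role_allowed=False)
    print(choice.name, "->", choice.array_role)
```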

Setup steps with Non-preconfigured... SRA option

I will not dig into minor setup details; this is meant to be a fast-track guide with some tips on how to get through the installation and setup process.

The following steps assume that you have already prepared and configured your SAN; see the “Preparing the SRA environment” section in the Storwize Family Replication Adapter 2.3.0 User Guide.

The same applies to the SRM installation. Perform all the steps listed below on both the protected and the recovery site SRM servers.

Note:

From my previous experience with Hitachi arrays and their Command Control Interface (CCI) with a command device (as an RDM disk), I must say that the IBM SRA setup is much easier and more straightforward, so much so that at the end I thought I must have missed something ...

This is mainly due to the communication layer between the SRA and the array. The IBM SRA relies primarily on a network connection to the SAN controller management interface, as opposed to the SAN fabric (via an RDM disk) as with Hitachi and others.

So I hope that no one will struggle with the following steps.

STEPS:

1. Download SRA (use SRA for Storwize Family not the one for SVC):

https://my.vmware.com/group/vmware/details?downloadGroup=SRM512&productId=291#product_downloads

[vCenter/SRM Server]

2. Install the SRA (verify that the installer points to the SRM installation directory)

3. Check that the SRA is successfully installed (if you don't see the installed SRA, click Rescan SRAs)

[Storwize Unified management GUI] https://<SANmanagementIP>/gui#home-start

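4. Create a user for the SRA and assign it to the Copy Operator group (note that for the non-preconfigured option the SRA user needs administrator privileges to create FC volumes; see the Cons of that option and note #11)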

[vCenter/SRM Server]

5. In the IBM SRA installation folder, run the SRA utility

6. To configure the SRA, double-click IBMSVCSRAUtil.exe to open the IBM configuration utility.

- Leave the Pre-Configured Env. checkbox blank

- Choose the desired volume type

- Type the ID of the storage pool where the SRA will create FlashCopy volumes.

- Ensure there is sufficient space.

- The Test MDisk Group ID and Volume Type settings may differ between sites

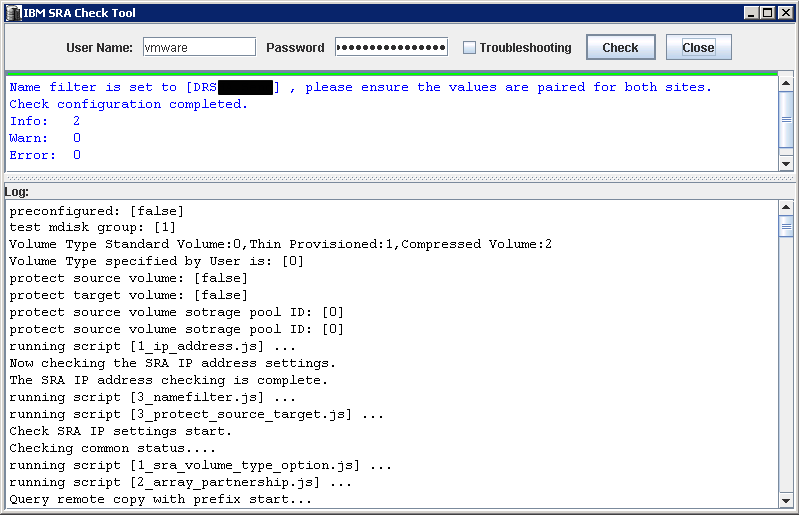
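Because these settings are entered separately on each site, it can help to write them down and sanity-check them before touching the utility. The Python sketch below is a hypothetical record of the values from step 6; it does not read or write anything used by IBMSVCSRAUtil.exe, and the volume type string is a placeholder for whatever you select in the utility.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class SraUtilSettings:
    """Hypothetical record of what was entered in IBMSVCSRAUtil.exe on one site."""
    site: str
    preconfigured: bool
    volume_type: str                    # placeholder for the chosen volume type
    test_mdisk_group_id: Optional[int]  # storage pool ID for on-demand FC volumes

    def problems(self) -> List[str]:
        """Return human-readable issues; an empty list means nothing obvious is missing."""
        issues = []
        if not self.preconfigured and self.test_mdisk_group_id is None:
            issues.append(
                f"{self.site}: Test MDisk Group ID is required when "
                "Pre-Configured Env. is unchecked"
            )
        if not self.volume_type:
            issues.append(f"{self.site}: no volume type chosen")
        return issues


if __name__ == "__main__":
    protected = SraUtilSettings("protected", False, "example-type", 1)
    recovery = SraUtilSettings("recovery", False, "example-type", None)
    for settings in (protected, recovery):
        print(settings.site, settings.problems() or "ok")
```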

To check whether your SAN configuration is correct, you can optionally run the SRA configuration check (“Check Configuration” button).

If everything is configured correctly, the output should look similar to this:

[vSphere client SRM config through plugin]

7. In the Array Managers view, click Add Array Manager and choose a custom display name for the array manager

8. Enter the array manager information

- Name Filter – if you leave it blank, you will see (under the Array Manager Devices tab) all the replicated volumes discovered in the array storage pool. For quick orientation it’s much better to type the replicated volume names here. On both sites, type the names (comma separated) of all local replicated volumes you want to see in the list.

- User name and Password – for the user created in step #4
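If you keep the list of replicated volumes in a script or spreadsheet, the Name Filter value from step 8 can be generated rather than typed. A minimal Python sketch follows; the volume names are invented, and joining without spaces around the commas is an assumption, so check how your SRA version treats whitespace.

```python
def build_name_filter(volume_names):
    """Join volume names into a comma-separated filter, dropping blanks and duplicates."""
    seen = []
    for name in volume_names:
        name = name.strip()
        if name and name not in seen:
            seen.append(name)
    return ",".join(seen)


if __name__ == "__main__":
    # Hypothetical names of the local replicated volumes on one site.
    print(build_name_filter(["SRM_DS01", " SRM_DS02 ", "", "SRM_DS01"]))
```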

9. Enable the pair on the Array Pairs tab (you need to do this only once, from either the protected or the recovery site SRM plugin).

You should then see an enabled pair:

Verify filtered device pairs and their replication direction:

---END---

NOTES and TIPS:

10. Just a picture from the array showing how the FlashCopy volume (created during and used by the SRM Test Recovery operation) looks on the back end in the defined storage pool.

The FC volume is automatically given a name with the prefix “vdisk” followed by its number.

11. If the user account configured in step #8 doesn't have the proper privileges to create FC volumes on the array, your Test Recovery will end with the following error:

12. Optionally, if you encounter a problem where the host (after an SRA/SRM-initiated rescan) doesn't see the recovered volumes and corresponding VMs because the replicated devices were not ready yet at the time of the HBA rescan, you can delay HBA rescans via the advanced SRM parameter “storageProvider.hostRescanDelaySec”:

13. If you do not want SRM to leave the snap-xxx prefix in the names of recovered datastores, you can force SRM to remove this auto-generated prefix by checking the “storageProvider.fixRecoveredDatastoreNames” box:

14. If a recovery fails due to a VMware Tools timeout, you can configure SRM not to wait for VMware Tools to start in the recovered VMs. (That’s useful if you intentionally have some VMs without VMware Tools installed.)

The option is Recovery.powerOnTimeout and you must set its value to 0.
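For reference, the three advanced options from notes 12 to 14 are collected below in a small Python sketch, together with a helper that shows the effect of the datastore rename. The option names and the 0 value come from the notes above; the rescan delay of 60 seconds is a placeholder, and the prefix pattern (snap-, eight hex digits, a dash) is an assumption about how the auto-generated prefix looks. This is not how SRM itself is implemented.

```python
import re

# Option names are taken from the notes above; the delay value is a placeholder.
SRM_ADVANCED_SETTINGS = {
    "storageProvider.hostRescanDelaySec": 60,           # hypothetical delay in seconds
    "storageProvider.fixRecoveredDatastoreNames": True,
    "Recovery.powerOnTimeout": 0,                       # value given in note 14
}

# Assumed shape of the auto-generated prefix: "snap-", eight hex digits, "-".
SNAP_PREFIX = re.compile(r"^snap-[0-9a-fA-F]{8}-")


def strip_snap_prefix(datastore_name):
    """Illustrate the rename: drop a leading snap-xxxxxxxx- prefix if present."""
    return SNAP_PREFIX.sub("", datastore_name, count=1)


if __name__ == "__main__":
    for key, value in SRM_ADVANCED_SETTINGS.items():
        print(f"{key} = {value}")
    # Hypothetical recovered datastore name.
    print(strip_snap_prefix("snap-1a2b3c4d-SRM_DS01"))
```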

Storwize v7000 IBM sources:

SRA and vCenter server plugin (IBM Storage Management Console for VMware vCenter) download:

http://www-933.ibm.com/support/fixcentral/

Storwize v7000 microcode download and microcode compatibility tables:

http://www-01.ibm.com/support/docview.wss?&uid=ssg1S1003705

http://www-01.ibm.com/support/docview.wss?uid=ssg1S4001306

IBM System Storage SAN Volume Controller and Storwize V7000 Best Practices and Performance Guidelines:

http://www.redbooks.ibm.com/abstracts/SG247521.html?Open

IBM SAN Solution Design Best Practices for VMware vSphere ESXi:

http://www.redbooks.ibm.com/abstracts/sg248158.html?Open

VMware vSphere best practices for IBM SAN Volume Controller and IBM Storwize family:

https://www-304.ibm.com/partnerworld/wps/servlet/download/

IBM Storwize Family Storage Replication Adapter documentation webpage:

http://pic.dhe.ibm.com/infocenter/strhosts/ic/index.jsp?topic=%2Fcom.ibm.help.strghosts.doc%2FSVC_SR...

Storwize v7000 VMware sources:

KB articles:

Using VMware vCenter Site Recovery Manager 5.x with IBM Storwize V7000 (2076) 6.4 (2013231)

http://kb.vmware.com/kb/2013231

Slow performance during Storage vMotion, Clone, and Snapshot consolidation operations in various storage systems (2007723)

http://kb.vmware.com/kb/2007723

IBM Storwize v7000 VAAI Support:

http://www.vmware.com/resources/compatibility/

IBM Storwize v7000 Supports:

Block Zero (Zero Blocks/Write Same), Full Copy (Clone Blocks/Full Copy/XCOPY), HW Assisted Locking (Atomic Test & Set (ATS))

IBM Storwize v7000 Doesn’t support:

Thin Provisioning VAAI API family

(TP Stun AKA “Out of Space Behavior”, TP Space Threshold Warning AKA “Quota Exceeded”, and Dead Space Reclamation / Thin Provisioning Block Space Reclamation AKA “UNMAP”)

How to verify that VAAI is working properly (from SAN perspective)

https://www.ibm.com/developerworks/community/blogs/anthonyv/entry/upgrading_to_esxi_5_0_how_to_confi...

Disclaimer:

You use this proven practice at your own discretion. The author does not guarantee any results from the use of this proven practice. It is provided on an as-is basis and is for demonstration purposes only.

Very nice, I wish I had this about 3 months ago. One thing I missed, which is sort of in an odd place in the documentation (IBM admitted to me it wasn't clearly spelled out), is that in the non-preconfigured environment you do not attach the mirrored datastores at the recovery site. I made that mistake and when I ran a test it bombed on step 8 because it couldn't find the storage. Once I pulled that away it worked as advertised. Thanks for this detailed procedure!

Thanks for your feedback ...
