Re: New to Vmware : Confused between relation of ESX/vSphere/Vcenter

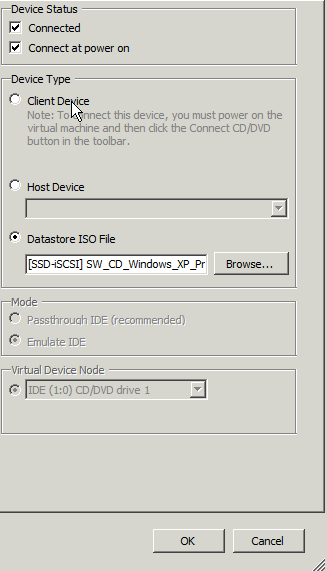

Did you check the "Connect at power on" box (not checked by default)?

You can do a bit of overprovisioning. It might not make things any quicker, but you can do it.

Hmm, let's see. Otherwise I will drop the plan to upgrade.

Is the software iSCSI adapter often used for ESXi-to-SAN connectivity in real life, or is it more often hardware HBA cards? What is your observation?

For iSCSI, I use the software adapter in all my deployments. I find that a hardware adapter with offload doesn't provide enough of an advantage over the software adapter to justify the extra cost.

Hey, a question on Linux DM multipathing: will I get multipathing with two NIC cards for an iSCSI LUN? I'm wondering whether it only works with HBAs (one HBA with 2 ports, or 2 HBAs). Sounds silly, ha.

Here is the conf:

    [root@kiran ~]# multipath -ll
    Dec 03 14:10:23 | multipath.conf line 61, invalid keyword: path_checker
    yellow (241e818a91a93124a) dm-0 ROCKET,IMAGEFILE
    size=99G features='1 queue_if_no_path' hwhandler='0' wp=rw
    `-+- policy='round-robin 0' prio=1 status=active
      `- 3:0:0:0 sdb 8:16 active ready running
    [root@kiran ~]#
    [root@kiran ~]#
    [root@kiran ~]# cat /etc/multipath.conf |grep -v "#"
    defaults {
            user_friendly_names yes
    }
    blacklist {
            wwid 26353900f02796769
            devnode "^(ram|raw|loop|fd|md|dm-|sr|scd|st)[0-9]*"
            devnode "^hd[a-z]"
    }
    multipaths {
            multipath {
                    wwid 241e818a91a93124a
                    alias yellow
                    path_grouping_policy multibus
                    path_checker readsector0
                    path_selector "round-robin 0"
                    failback manual
                    rr_weight priorities
                    no_path_retry 5
            }
    }
    [root@kiran ~]#
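The `invalid keyword: path_checker` warning refers to line 61 of the unfiltered file, and in the filtered listing `path_checker` appears only inside the `multipath { ... }` block, which suggests this multipath-tools version does not accept it there. Below is a minimal Python sketch, assuming the simple one-keyword-per-line layout shown above, that reports which section each keyword sits in so a misplaced one is easy to spot. The helper name `keyword_sections` is hypothetical, and this is not how multipathd itself parses the file.

```python
def keyword_sections(conf_text):
    """Return (section_path, keyword) pairs for a multipath.conf-style text.

    Assumes one keyword per line, '{' at the end of a section header line,
    and '}' alone on its own line, as in the listing above.
    """
    stack = []   # currently open sections, outermost first
    found = []
    for raw in conf_text.splitlines():
        # Drop comments and surrounding whitespace (assumes no '#' in values).
        line = raw.split("#", 1)[0].strip()
        if not line:
            continue
        if line.endswith("{"):
            stack.append(line[:-1].strip())
        elif line == "}":
            if stack:
                stack.pop()
        else:
            found.append(("/".join(stack), line.split()[0]))
    return found


# Shortened copy of the configuration from the post.
SAMPLE_CONF = """\
defaults {
    user_friendly_names yes
}
multipaths {
    multipath {
        wwid 241e818a91a93124a
        alias yellow
        path_checker readsector0
    }
}
"""

for section, keyword in keyword_sections(SAMPLE_CONF):
    print(f"{section:22} {keyword}")
```

If the warning persists, the usual place for `path_checker` is the `defaults` section or a `devices` entry, but `man multipath.conf` for the installed version is the authority on that.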

===

I have 2 NIC cards:

    [root@kiran ~]# ifconfig
    eth0      Link encap:Ethernet  HWaddr 00:0C:29:10:76:F4
              inet addr:192.168.1.131  Bcast:192.168.1.255  Mask:255.255.255.0
              inet6 addr: fe80::20c:29ff:fe10:76f4/64 Scope:Link
              UP BROADCAST RUNNING MULTICAST  MTU:1500  Metric:1
              RX packets:1336687 errors:0 dropped:0 overruns:0 frame:0
              TX packets:515363 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:1000
              RX bytes:1927244977 (1.7 GiB)  TX bytes:35823630 (34.1 MiB)
              Interrupt:19 Base address:0x2024

    eth1      Link encap:Ethernet  HWaddr 00:50:56:34:00:1C
              inet addr:192.168.1.171  Bcast:192.168.1.255  Mask:255.255.255.0
              inet6 addr: fe80::250:56ff:fe34:1c/64 Scope:Link
              UP BROADCAST RUNNING MULTICAST  MTU:1500  Metric:1
              RX packets:3556 errors:0 dropped:0 overruns:0 frame:0
              TX packets:829 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:1000
              RX bytes:469908 (458.8 KiB)  TX bytes:79297 (77.4 KiB)
              Interrupt:19 Base address:0x20a4

    lo        Link encap:Local Loopback
              inet addr:127.0.0.1  Mask:255.0.0.0
              inet6 addr: ::1/128 Scope:Host
              UP LOOPBACK RUNNING  MTU:16436  Metric:1
              RX packets:31 errors:0 dropped:0 overruns:0 frame:0
              TX packets:31 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:0
              RX bytes:2436 (2.3 KiB)  TX bytes:2436 (2.3 KiB)

    [root@kiran ~]#

Thanks
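Whether multipathing is actually in effect can be read off the `multipath -ll` output: each `H:C:T:L sdX` line under a map is one path, and the listing in this post shows only `sdb`, so there is a single path. dm-multipath works on block devices, so it does not matter whether the paths come from HBAs or from NICs carrying software iSCSI sessions. What matters is that the same LUN shows up as more than one SCSI device. Below is a small Python sketch, assuming the output format shown in the post (a map line of the form `name (wwid) dm-N ...`), that counts paths per map. The function name `count_paths` is made up for illustration.

```python
import re

# A path line looks like "`- 3:0:0:0 sdb 8:16 active ready running".
PATH_RE = re.compile(r"\d+:\d+:\d+:\d+\s+(sd[a-z]+)\s+\d+:\d+")
# A map header looks like "yellow (241e818a91a93124a) dm-0 ROCKET,IMAGEFILE".
MAP_RE = re.compile(r"^(\S+)\s+\((\w+)\)\s+dm-\d+")


def count_paths(output):
    """Map each multipath device name to the list of its path devices."""
    paths = {}
    current = None
    for line in output.splitlines():
        header = MAP_RE.match(line)
        if header:
            current = header.group(1)
            paths[current] = []
            continue
        found = PATH_RE.search(line)
        if found and current is not None:
            paths[current].append(found.group(1))
    return paths


# The `multipath -ll` output from the post.
SAMPLE_OUTPUT = """\
Dec 03 14:10:23 | multipath.conf line 61, invalid keyword: path_checker
yellow (241e818a91a93124a) dm-0 ROCKET,IMAGEFILE
size=99G features='1 queue_if_no_path' hwhandler='0' wp=rw
`-+- policy='round-robin 0' prio=1 status=active
  `- 3:0:0:0 sdb 8:16 active ready running
"""

for name, devices in count_paths(SAMPLE_OUTPUT).items():
    print(name, len(devices), devices)
```

With open-iscsi, a second path would normally come from logging in to the same target through a second interface binding, after which a second `sdX` line should appear under the same map.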

Hey Tom,

Can you tell me if it's OK to have an ESXi cluster of 3 nodes with the ESXi OS on the local disk of the Dell/Sun servers (with, say, RAID 0 for the local disk) and all the VMs on the NetApp NFS storage?

Thanks

That's OK. Once ESXi is fully booted, (almost) everything will run from memory. Even better (think green IT) would be to use an 8GB USB stick for the ESXi OS. If you pick a decent one, the boot process of ESXi will be faster too.

Hey,

Re-checking: is that NFS share OK for the OS? Because I guess that is NFS and not VMFS.

Thanks,

NFS is also supported to run guests. It handles some things differently than VMFS, but it is definitely possible. Many people say that NFS isn't a decent storage filesystem for vSphere, but NetApp disagrees. I also think that it's a good solution; however, I still prefer VMFS over NFS. But in your case it is possible, and using NFS should not hold you back.

Cool, thanks for the info and for sharing your ideas.
