Opinion on my design

Hello everyone,

I've been tasked with spearheading a P2V migration for an SMB. This includes procuring hardware, vSphere design, deployment, and go-live.

I have the following:

2 SANs (Dell 3820i) with 2 controllers and 2x10Gb iSCSI on each controller.

3 servers (Dell R730) with 6 NICs (2x10Gb + 2x1Gb on LOM, plus 2x10Gb on an expansion card).

1 Cisco Switch NX-3064T with 48 Ethernet ports.

Currently, we have 192.168.9.0/24 as the production network (on the physical network), so I left this network intact in my virtual design.

Our vSphere license doesn't allow us to use vDS.

The diagram of my design is as follows:

What is your opinion of my design? Do you find it adequate, or do you have any suggestions for improvement?

Thank you

Dan

You do not have a license for NIOC, so I would prefer to use 2 NICs for the first part of the traffic and 2 NICs for the second part:

First pair: Prod, Devel, Mgmt traffic

Second pair: vMotion, iSCSI, FT

The mix depends on your bandwidth requirements for each of those.
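To make the "depends on your bandwidth requirements" point concrete, here is a minimal Python sketch that sums peak demand per NIC pair and flags pairs that would not fit on a single surviving 10Gb uplink. All the Gbit/s figures are hypothetical placeholders, not measurements from this environment; only the grouping of traffic types comes from the post.

```python
# Hypothetical peak bandwidth estimates in Gbit/s; replace with measured values.
PEAK_GBPS = {
    "Prod": 3.0, "Devel": 1.0, "Mgmt": 0.5,
    "vMotion": 8.0, "iSCSI": 6.0, "FT": 2.0,
}

# Traffic grouping suggested in the post.
PAIRS = {
    "pair1": ["Prod", "Devel", "Mgmt"],
    "pair2": ["vMotion", "iSCSI", "FT"],
}

LINK_GBPS = 10.0  # each uplink in a pair is assumed to be 10Gb


def pair_load(pairs, peaks):
    """Return the summed peak demand per NIC pair."""
    return {name: sum(peaks[t] for t in types) for name, types in pairs.items()}


def oversubscribed(pairs, peaks, surviving_links=1):
    """List pairs whose peak demand exceeds capacity with `surviving_links` uplinks up."""
    capacity = LINK_GBPS * surviving_links
    return [name for name, load in pair_load(pairs, peaks).items() if load > capacity]


if __name__ == "__main__":
    print(pair_load(PAIRS, PEAK_GBPS))
    print(oversubscribed(PAIRS, PEAK_GBPS, surviving_links=1))
```

If a pair shows up as oversubscribed with one surviving link, that is a hint to move a traffic type to the other pair or to accept degraded performance during a NIC failure.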

What I see as a big problem here is the single switch. You have a nice clustered environment and dual-controller storage, but everything is connected to a single switch?
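The single-switch concern can be checked mechanically. The sketch below models the cabling as a graph and reports every network device whose loss cuts a host off from all storage. The node names (`esx1`, `san1`, `switch1`, and so on) are made up for illustration, and the topology is a simplification of what the thread describes.

```python
from collections import deque

# Simplified topology: three hosts and two SANs, all cabled to one switch.
LINKS = [
    ("esx1", "switch1"), ("esx2", "switch1"), ("esx3", "switch1"),
    ("san1", "switch1"), ("san2", "switch1"),
]
HOSTS = ["esx1", "esx2", "esx3"]
STORAGE = ["san1", "san2"]


def reachable(links, start, removed):
    """Return the set of nodes reachable from `start` when `removed` is down."""
    if start == removed:
        return set()
    graph = {}
    for a, b in links:
        if removed in (a, b):
            continue
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def single_points_of_failure(links, hosts, storage):
    """Network devices whose loss cuts at least one host off from all storage."""
    nodes = {n for link in links for n in link}
    devices = nodes - set(hosts) - set(storage)
    return sorted(
        d for d in devices
        if any(not (reachable(links, h, d) & set(storage)) for h in hosts)
    )


if __name__ == "__main__":
    print(single_points_of_failure(LINKS, HOSTS, STORAGE))
```

Cabling every host and SAN to a second switch as well makes the same function return an empty list, which is the argument for buying two switches.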

Also, I don't know what the difference is between the green and red lines. Is it primary and standby links? I typically go with load balancing based on the originating virtual port ID.

Martin

I agree with Martin, you need a minimum of 2 switches for redundancy.

As for the rest, I think it's a good topology.

I would recommend creating at least two standard vSwitches:

vSwitch0

2x10Gb uplinks (Management, VM traffic, vMotion)

Assign as many port groups as you need here. I would recommend configuring vmnic1 as Active and vmnic0 as Standby for the vMotion port group, and vmnic0 as Active and vmnic1 as Standby for the Management and VM port groups. That way vMotion traffic is kept separate and does not affect the VMs, while all port groups remain redundant at the network level.
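As a rough illustration of that Active/Standby layout, the Python sketch below models the three port groups and shows which uplink each one would use when a NIC fails. The port group name "VM Network" and the failover logic (first healthy Active uplink, then first healthy Standby) are simplifying assumptions, not a description of how ESXi implements teaming internally.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Teaming:
    active: tuple
    standby: tuple = ()


# vmnic0/vmnic1 are the two 10Gb uplinks of vSwitch0, as in the post.
VSWITCH0 = {
    "Management": Teaming(active=("vmnic0",), standby=("vmnic1",)),
    "VM Network": Teaming(active=("vmnic0",), standby=("vmnic1",)),
    "vMotion": Teaming(active=("vmnic1",), standby=("vmnic0",)),
}


def uplink_in_use(teaming, failed=frozenset()):
    """Return the uplink a port group would use, or None if all are down."""
    for nic in teaming.active + teaming.standby:
        if nic not in failed:
            return nic
    return None


def placement(port_groups, failed=frozenset()):
    """Map each port group to the uplink it would use given the failed NICs."""
    return {name: uplink_in_use(t, failed) for name, t in port_groups.items()}


if __name__ == "__main__":
    print(placement(VSWITCH0))               # normal operation
    print(placement(VSWITCH0, {"vmnic1"}))   # vMotion falls back to vmnic0
```

In normal operation vMotion sits alone on vmnic1. After a single NIC failure everything shares the surviving uplink, so redundancy is kept at the cost of temporary contention.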

vSwitch1

2x10Gb uplinks (iSCSI)

It's highly recommended to keep storage traffic separate from all other types of traffic. You will need two VMkernel ports here to make sure you utilize both 10Gb uplinks. Each VMkernel port group has to have one link as Active and the other one as Unused. If you are using one iSCSI subnet, make sure to use port binding.
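The Active/Unused rule for the iSCSI VMkernel port groups is easy to get wrong, so here is a small Python sketch of a sanity check over a planned layout. The port group names (`iSCSI-A`, `iSCSI-B`) and uplink names (`vmnic2`, `vmnic3`) are hypothetical, and the check only encodes the rules stated above plus the goal of using both uplinks.

```python
# Hypothetical planned layout for vSwitch1; names are illustrative only.
ISCSI_PORT_GROUPS = {
    "iSCSI-A": {"active": ["vmnic2"], "standby": [], "unused": ["vmnic3"]},
    "iSCSI-B": {"active": ["vmnic3"], "standby": [], "unused": ["vmnic2"]},
}


def binding_problems(port_groups):
    """Return a list of human-readable problems; an empty list means the layout passes."""
    problems = []
    for name, pg in port_groups.items():
        if len(pg["active"]) != 1:
            problems.append(f"{name}: needs exactly one Active uplink")
        if pg["standby"]:
            problems.append(f"{name}: Standby uplinks are not allowed")
    # To use both uplinks, each port group should be pinned to a different one.
    actives = [pg["active"][0] for pg in port_groups.values() if len(pg["active"]) == 1]
    if len(set(actives)) != len(actives):
        problems.append("two port groups share the same Active uplink")
    return problems


if __name__ == "__main__":
    print(binding_problems(ISCSI_PORT_GROUPS))
```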

And NO link aggregation. Standard vSwitches do not support LACP; only a static port channel with IP-hash load balancing is possible, and it is not needed here. Trunking is perfectly fine, though.

Hope that makes sense.

If you found my answers helpful please consider marking them as helpful or correct.

VCIX-DCV, VCIX-NV, VCAP-CMA | vExpert '16, '17, '18

Blog: http://niktips.wordpress.com | Twitter: @nick_andreev_au

Thank you for your opinion. That is certainly one of my considerations.

My proposal was to use 2 switches for redundancy, but the team leader decided on using just one.

You are correct about the red and green lines. The red line is the primary link and the green is the standby.

Dan

> My proposal was to use 2 switches for redundancy, but the team leader decided on using just one.

A ballsy move by the team leader 😉

Make sure to write down your proposal. You don't want to be the one dealing with it when one little failing Cisco switch brings down the whole shiny new infrastructure 😉

For the design question I agree with Nick's comments.

Tim

Thank you for suggesting 2 vSwitches in the design. That was actually my first design.

What do you mean by separating iSCSI traffic from other traffic? In my design, iSCSI traffic is already isolated by using VLAN 50.

Dan

You want to separate the iSCSI traffic not only logically, but also physically. Since you don't have Network I/O Control due to your vSphere license, you cannot manage QoS for your traffic.

So the iSCSI traffic could use 100% of your bandwidth, leaving none for your VMs if they used the same link.

Forgive me if you already planned to use a dedicated link for iSCSI. I don't really get all the lines in your diagram.

Tim

In my design, vmnic5 is dedicated to iSCSI traffic. If another vmnic goes down, its traffic can be routed over vmnic5 or other vmnics as failover.

Dan

I mostly echo what the other guys are saying. Keep iSCSI traffic on its own set of physical NICs on its own vSwitch.

The rest of the traffic can be segmented out via VLANs on another vSwitch.

Make sure you voice and document EXTREME concern about the decision to use only one physical switch. The reality is that the switch will eventually go down when it's not supposed to, bringing the entire vSphere environment down with it. One day someone is going to catch heat for that, so let it be known now that you did not recommend that design choice. Hopefully that way it won't be a resume-generating event for you, but rather for someone else!

Good luck

If you are using only one NIC for iSCSI and you have a cable failure, all iSCSI sessions will have to fail over to a standby NIC, which can be disruptive. It's common to use two NICs for iSCSI and configure multipathing.

There are also two approaches to setting up iSCSI multipathing. You will need two VMkernel ports on either one subnet or two subnets. If you decide to use one subnet, you will need to configure port binding in the software iSCSI adapter properties. Otherwise the hypervisor will use only the first available VMkernel port and won't benefit from having two uplinks.

Also make sure to set one uplink as Active and the other one as Unused on each of the iSCSI VMkernel port groups, or multipathing won't work properly.
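The one-subnet versus two-subnet decision can be sanity-checked from the planned VMkernel addresses. This is a minimal Python sketch using the standard `ipaddress` module; the addresses in the usage lines are made-up examples, not the ones from this environment.

```python
import ipaddress


def needs_port_binding(vmk_interfaces):
    """Return True when all iSCSI VMkernel interfaces sit in the same subnet.

    `vmk_interfaces` is a list of strings in address/prefix form.
    """
    networks = {ipaddress.ip_interface(addr).network for addr in vmk_interfaces}
    return len(networks) == 1


if __name__ == "__main__":
    # Hypothetical addresses: both VMkernel ports in one /24, so port binding applies.
    print(needs_port_binding(["10.0.50.11/24", "10.0.50.12/24"]))
    # Hypothetical addresses: one VMkernel port per subnet, the two-subnet approach.
    print(needs_port_binding(["10.0.50.11/24", "10.0.51.11/24"]))
```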

Hope that helps.

VCIX-DCV, VCIX-NV, VCAP-CMA | vExpert '16, '17, '18

Blog: http://niktips.wordpress.com | Twitter: @nick_andreev_au

I am considering using vmnic5 exclusively for iSCSI traffic and taking out all failover links connected to vmnic5. I am also considering using 2 NICs for iSCSI. I haven't made up my mind yet.

As of now, I am leaning toward the former, like this:

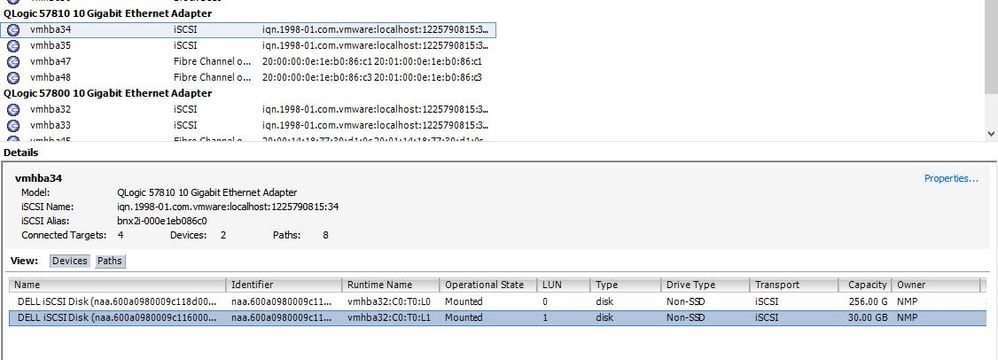

Currently, I am running 4 vmkernel ports for 1 SAN utilizing 4 HBAs. Port binding is enabled on all of those vmkernel ports.

ESXi only allows me to set one port as Active and the other as Unused on all of those iSCSI VMkernel ports, unless I use software iSCSI, IIRC.

Below is a screenshot of my newly created 30GB datastore:

and the Manage Paths window:

I am still tinkering with the configuration and settings. Hopefully I will end up with the best configuration.

Opinions and suggestions are welcome.

Thanks

Dan