- Re: NFS 4.1 export mounts as read-only (RECLAIM_CO...

It seems that when mounting an NFS 4.1 export with Windows Server 2012 R2 as the server, people are getting the following error:

2015-08-15T13:55:59.460Z cpu0:34075 opID=e5c595d5)NFS41: NFS41_VSIMountSet:402: Mount server: 172.20.10.14, port: 2049, path: normal, label: nfs, security: 1 user: , options: <none>

2015-08-15T13:55:59.460Z cpu0:34075 opID=e5c595d5)StorageApdHandler: 982: APD Handle Created with lock[StorageApd-0x43059cff25c0]

2015-08-15T13:55:59.461Z cpu1:33347)NFS41: NFS41ProcessClusterProbeResult:3865: Reclaiming state, cluster 0x43059cff3780 [4]

2015-08-15T13:55:59.462Z cpu0:34075 opID=e5c595d5)NFS41: NFS41FSCompleteMount:3582: Lease time: 120

2015-08-15T13:55:59.462Z cpu0:34075 opID=e5c595d5)NFS41: NFS41FSCompleteMount:3583: Max read xfer size: 0x3fc00

2015-08-15T13:55:59.462Z cpu0:34075 opID=e5c595d5)NFS41: NFS41FSCompleteMount:3584: Max write xfer size: 0x3fc00

2015-08-15T13:55:59.462Z cpu0:34075 opID=e5c595d5)NFS41: NFS41FSCompleteMount:3585: Max file size: 0xfffffff0000

2015-08-15T13:55:59.462Z cpu0:34075 opID=e5c595d5)NFS41: NFS41FSCompleteMount:3586: Max file name: 255

2015-08-15T13:55:59.462Z cpu0:34075 opID=e5c595d5)WARNING: NFS41: NFS41FSCompleteMount:3591: The max file name size (255) of file system is larger than that of FSS (128)

2015-08-15T13:55:59.463Z cpu0:34075 opID=e5c595d5)WARNING: NFS41: NFS41FSCompleteMount:3601: RECLAIM_COMPLETE FS failed: Failure; forcing read-only operation

2015-08-15T13:55:59.463Z cpu0:34075 opID=e5c595d5)NFS41: NFS41FSAPDNotify:5651: Restored connection to the server 172.20.10.14 mount point nfs, mounted as 907c6b39-acb49d79-0000-000000000000 ("normal")

2015-08-15T13:55:59.463Z cpu0:34075 opID=e5c595d5)NFS41: NFS41_VSIMountSet:414: nfs mounted successfully
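For anyone who wants to check their own hosts for the same symptom, here is a minimal sketch that scans log lines shaped like the ones above and reports which mounts were forced read-only. The function name and regular expressions are my own and are based only on the lines quoted in this post; other ESXi builds may word these messages differently.

```python
import re

# Mount request line: captures server, path, and label (format assumed from the log above).
MOUNT_REQ = re.compile(
    r"NFS41_VSIMountSet:\d+: Mount server: ([^,]+), port: \d+, path: ([^,]+), label: ([^,]+),"
)
# Warning emitted when the per-file-system RECLAIM_COMPLETE fails.
RECLAIM_FAIL = re.compile(r"NFS41FSCompleteMount:\d+: RECLAIM_COMPLETE FS failed")


def find_forced_read_only(lines):
    """Return a list of (server, path, label) for mounts that were forced read-only."""
    results = []
    current = None  # most recent mount request seen, if any
    for line in lines:
        match = MOUNT_REQ.search(line)
        if match:
            current = (match.group(1), match.group(2), match.group(3))
        elif RECLAIM_FAIL.search(line) and current is not None:
            results.append(current)
            current = None
    return results
```

Feeding it the lines of a vmkernel.log (for example `find_forced_read_only(open(path))`, where `path` is wherever you copied the log) should list one tuple per affected mount.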

A snippet from the NFS 4.1 RFC has this to say about RECLAIM_COMPLETE (RFC 5661 - NFS Version 4 Minor Version 1 - Page 567)

Whenever a client establishes a new client ID and before it does the first non-reclaim operation that obtains a lock, it MUST send a RECLAIM_COMPLETE with rca_one_fs set to FALSE, even if there are no locks to reclaim. If non-reclaim locking operations are done before the RECLAIM_COMPLETE, an NFS4ERR_GRACE error will be returned.

Similarly, when the client accesses a file system on a new server, before it sends the first non-reclaim operation that obtains a lock on this new server, it MUST send a RECLAIM_COMPLETE with rca_one_fs set to TRUE and current filehandle within that file system, even if there are no locks to reclaim. If non-reclaim locking operations are done on that file system before the RECLAIM_COMPLETE, an NFS4ERR_GRACE error will be returned.

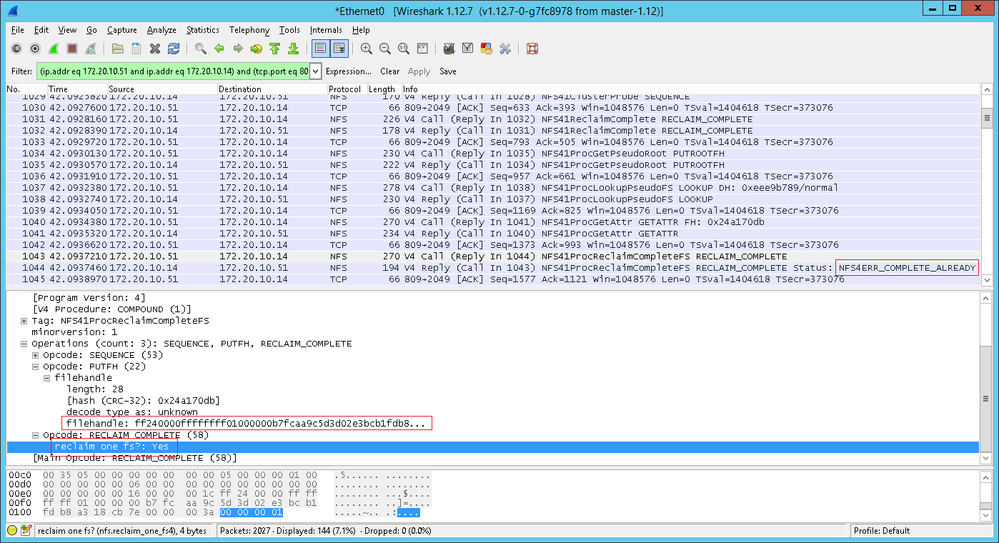

I ran a capture during the mount, which shows that the ESXi host sent a RECLAIM_COMPLETE with rca_one_fs set to FALSE; later it sent another RECLAIM_COMPLETE with rca_one_fs set to TRUE and a filehandle specified. This looks like the correct behavior.

Last of all, back in the RFC is this little tidbit:

RECLAIM_COMPLETE should only be done once for each server instance or occasion of the transition of a file system. If it is done a second time, the error NFS4ERR_COMPLETE_ALREADY will result. Note that because of the session feature's retry protection, retries of COMPOUND requests containing RECLAIM_COMPLETE operation will not result in this error.

The screenshots also appear to show that both RECLAIM_COMPLETE operations are sent as COMPOUND requests. So it seems to me that ESXi is doing what it is supposed to do, but the NFS server is sending back an error when it shouldn't, which causes the host to fall back to read-only access mode.

Any input from the wider community? Happy to stand corrected!
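To make the reasoning above easier to follow, here is a toy model of the rules in the two RFC passages quoted in this post. It is a sketch of my reading of the quoted text only: the class and method names are invented, it tracks a single client ID, and it ignores sessions, replay caching, and everything else a real server does. It says nothing about how the Windows NFS server is actually implemented.

```python
from enum import Enum


class Status(Enum):
    OK = "NFS4_OK"
    GRACE = "NFS4ERR_GRACE"
    COMPLETE_ALREADY = "NFS4ERR_COMPLETE_ALREADY"


class ToyServer:
    """Hypothetical server-side bookkeeping for one client ID."""

    def __init__(self, transitioned_fs=()):
        self.global_done = False                 # RECLAIM_COMPLETE with rca_one_fs=FALSE seen
        self.fs_pending = set(transitioned_fs)   # file systems that need a per-fs RECLAIM_COMPLETE
        self.fs_done = set()                     # file systems where rca_one_fs=TRUE was seen

    def reclaim_complete(self, rca_one_fs, fs=None):
        if not rca_one_fs:
            if self.global_done:
                return Status.COMPLETE_ALREADY   # second global RECLAIM_COMPLETE
            self.global_done = True
            return Status.OK
        if fs in self.fs_done:
            return Status.COMPLETE_ALREADY       # second per-fs RECLAIM_COMPLETE for this fs
        self.fs_done.add(fs)
        return Status.OK

    def non_reclaim_lock(self, fs):
        """A non-reclaim operation that obtains a lock on file system `fs`."""
        if not self.global_done:
            return Status.GRACE
        if fs in self.fs_pending and fs not in self.fs_done:
            return Status.GRACE
        return Status.OK
```

In this model the sequence seen in the ESXi capture (one RECLAIM_COMPLETE with rca_one_fs FALSE, then one with rca_one_fs TRUE and a filehandle) returns OK both times, and only a repeat of either call produces NFS4ERR_COMPLETE_ALREADY.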

Accepted Solutions

I filed a support request for VMware to look into this issue. They are aware of the problem and there is currently no solution available. They said that they are targeting Windows 2016 for delivering a solution since it will require a major code change. It looks like we are out of luck for now.

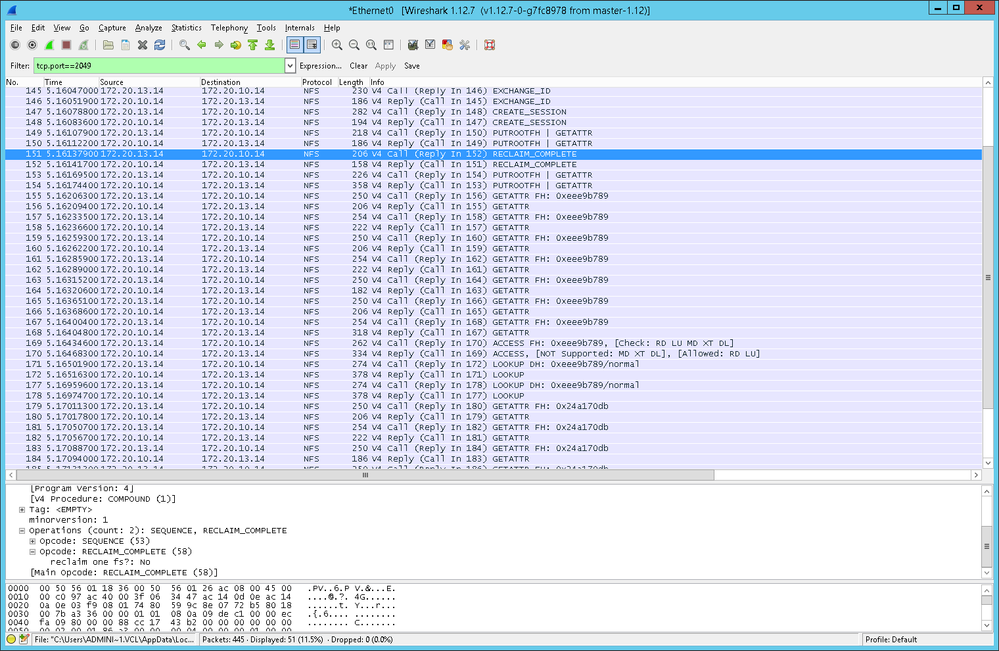

This is the capture from a Linux client mounting the same volume with R/W access. The Linux client only sends a single RECLAIM_COMPLETE operation. Perhaps ESXi is not doing what it should do after all?

I am seeing something similar when trying to create a new datastore by connecting to Windows Server 2012 R2 via NFS 4.1. The datastore ends up read-only, but if I connect using NFS 3 there is no problem.

I am having the same issue. When I mount a datastore to a location using NFS 4.1, without Kerberos, and the access mode is read-write, the share mounts but as read-only. In vSphere when I check Datastores it shows "Datastore (read only)". My datastore NFS location is Windows Server 2012. Also, NFS 4.1 doesn't work at all on Windows Server 2008 R2. Is 4.1 not supported on 2008 R2?

Here is what the vmkernel.log shows:

2015-11-30T21:24:08.677Z cpu1:34017 opID=8fce142c)NFS41: NFS41_VSIMountSet:402: Mount server: VSVR12-01, port: 2049, path: /VMs, label: Datastore, security: 1 user: , options: <none>

2015-11-30T21:24:08.677Z cpu1:34017 opID=8fce142c)StorageApdHandler: 982: APD Handle Created with lock[StorageApd-0x4306a72bc140]

2015-11-30T21:24:08.680Z cpu1:33354)NFS41: NFS41ProcessClusterProbeResult:3865: Reclaiming state, cluster 0x4306a72bd2c0 [26]

2015-11-30T21:24:08.691Z cpu1:34017 opID=8fce142c)NFS41: NFS41FSCompleteMount:3582: Lease time: 120

2015-11-30T21:24:08.691Z cpu1:34017 opID=8fce142c)NFS41: NFS41FSCompleteMount:3583: Max read xfer size: 0x3fc00

2015-11-30T21:24:08.691Z cpu1:34017 opID=8fce142c)NFS41: NFS41FSCompleteMount:3584: Max write xfer size: 0x3fc00

2015-11-30T21:24:08.691Z cpu1:34017 opID=8fce142c)NFS41: NFS41FSCompleteMount:3585: Max file size: 0xffffffff000

2015-11-30T21:24:08.691Z cpu1:34017 opID=8fce142c)NFS41: NFS41FSCompleteMount:3586: Max file name: 255

2015-11-30T21:24:08.691Z cpu1:34017 opID=8fce142c)WARNING: NFS41: NFS41FSCompleteMount:3591: The max file name size (255) of file system is larger than that of FSS (128)

2015-11-30T21:24:08.692Z cpu1:34017 opID=8fce142c)WARNING: NFS41: NFS41FSCompleteMount:3601: RECLAIM_COMPLETE FS failed: Failure; forcing read-only operation

2015-11-30T21:24:08.693Z cpu1:34017 opID=8fce142c)NFS41: NFS41FSAPDNotify:5651: Restored connection to the server VSVR12-01 mount point Datastore, mounted as 2bf37a9c-ba7ba30d-0000-00000000

2015-11-30T21:24:08.693Z cpu1:34017 opID=8fce142c)NFS41: NFS41_VSIMountSet:414: Datastore mounted successfully

2015-11-30T21:24:15.677Z cpu1:423423)WARNING: LinuxSignal: 181: Signal 20 has unimplemented cartel-level semantics (sending anyway)
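The transfer and file size limits in these logs are printed in hexadecimal, which makes them hard to compare at a glance (this log reports a max file size of 0xffffffff000, while the log in the first post reports 0xfffffff0000). A small sketch for converting them, with a helper name of my own choosing:

```python
def describe_bytes(hex_text):
    """Convert a hex byte count such as '0x3fc00' to (bytes, human-readable string)."""
    n = int(hex_text, 16)
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB"]
    value = float(n)
    i = 0
    # Divide by 1024 until the value fits the current unit or units run out.
    while value >= 1024 and i + 1 != len(units):
        value /= 1024
        i += 1
    return n, f"{value:.2f} {units[i]}"
```

For example, `describe_bytes("0x3fc00")` gives 261120 bytes, i.e. 255 KiB, for the max read and write transfer sizes.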


Any help would be appreciated!

Hi nirvy,

Did you ever find out how to solve this?

I did not, unfortunately.

OK, here is an update to my problem:

In the vSphere Web Client, from the HOME screen, I click "Hosts and Clusters," choose my host on the left side, then click "Related Objects" on the right and choose the "Datastores" tab. The datastore shows (read only) after the datastore name, and if I create a new datastore on this page it also shows (read only). However, if I choose "Storage" from the HOME page, with my datacenter highlighted on the left side, "Related Objects" chosen on the right, and the "Datastores" tab selected below, the (read only) text next to the datastore name is gone. Also, when I create a datastore on this page, I do not get the (read only) text next to the datastore name.

Any ideas why this might be the case?

Thank you in advance!

To add one more note: I am logged into the vServer computer with domain admin access and then logging into vSphere Web Client with administrator@vsphere.local.

It looks like the web client does not always display the read-only flag. I can mount a datastore in the web client and it looks fine until I open the vSphere client, which shows it as read-only. This is problematic because the vSphere client mounts NFS shares using version 3 only, so you have to use the web client to set up a share using NFS 4.1, but you cannot see its true status until you check it with the vSphere client. I wish they would just have all functionality in the client.

Just FYI: I have only been using the vSphere Web Client, and I am experiencing these issues within the vSphere Web Client. I do not use the VMware client.

NFS 4.1 exports are not supported by the vSphere client, only by the web interface. If you try to mount them using the vSphere client, they will not be mounted properly.

Thanks for letting us know!

Any update to this by chance, or any workarounds? We are experiencing this exact issue:

2015-12-18T18:12:59.004Z cpu12:36158 opID=a28601f0)WARNING: NFS41: NFS41FSCompleteMount:3601: RECLAIM_COMPLETE FS failed: Failure; forcing read-only operation

NFSv3 works, but is horrendously slow (7 MBps using dd or CrystalDiskMark). If I mount the NFS 4.1 share on CentOS Linux, it mounts successfully and I get upwards of 2 GBps write (using dd), but with vSphere 6 I have the same issue as the OP.
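For anyone who wants a comparable number without dd, here is a rough sketch of a sequential write test. The function name, default sizes, and target path are placeholders of my own, and the result will not match dd or CrystalDiskMark exactly because block size, caching, and sync behavior all differ.

```python
import os
import time


def write_throughput_mib_s(path, total_mib=64, block_kib=1024):
    """Write total_mib of zeros to path in block_kib chunks and return MiB/s."""
    block = b"\0" * (block_kib * 1024)
    blocks = (total_mib * 1024) // block_kib
    start = time.perf_counter()
    with open(path, "wb") as f:
        for _ in range(blocks):
            f.write(block)
        f.flush()
        os.fsync(f.fileno())  # include the time needed to push data to storage
    elapsed = time.perf_counter() - start
    written_mib = blocks * len(block) / (1024 * 1024)
    return written_mib / elapsed
```

Run it against a file on the mount being tested (for example from a Linux client or from inside a guest whose disk lives on the datastore) and delete the test file afterwards.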

I have exactly the same problem when mounting an NFS share from FreeBSD 11. Identical error message.

I'm using the latest vSphere 6.5, and this problem still exists. Hopefully someone from VMware can address this problem soon.

I am using ESXi 6.0 U3 and Windows 2012 R2 Storage Server, and the issue still exists. That means that support for NFS 4.1 is broken in ESXi.

NFS v3 works OK.

I am asking my VMware rep about this to see what they say... RECLAIM_COMPLETE bug...

Hi, is there any update on this problem?

I am trying to mount an NFS 4.1 export from our FreeBSD server, but regardless of what I have tried so far, it always mounts as read-only.

Thanks,

Andi

I can confirm this issue; I am also seeing it with:

VCS 6.5

ESXi 6.5

QNAP NFS 4.1 (Fresh volume)

No Kerberos enabled

I did note, though, that if I attached an NFS 3 volume and an NFS 4 volume at the same time, vMotioned using the NFS 3 volume, and then vMotioned again from the NFS 3 volume to the NFS 4 volume, no further issues occurred after that point, even when vMotioning other VMs. So I am assuming a possible permission issue at the root level... I am not going to test my luck and leave this in place.

If I copy the VM back to a local store, remove all NFS volumes, and then reattach the NFS 4 volume, the issue reoccurs.

Steven

Hi,

Does the warning logged just above that error make any sense, or could it be what creates the issue you're looking to resolve?

WARNING: NFS41: NFS41FSCompleteMount:3591: The max file name size (255) of file system is larger than that of FSS (128)

I have FreeNAS 11.2 U1 and I am trying to set up an NFS 4.1 volume for VMware ESXi 6.0 or 6.7 (I also have a vCenter Server on 6.5), and I am still not having any luck. Mounting from the command line works, but the datastore is read-only.

I want to use session trunking with multiple IP addresses and see whether VMware will round-robin those connections like iSCSI, or whether guest sessions are tied to a single IP, in which case I would need a LOT of VMs to load up a 2- or 4-port NIC.

Thanks,

Joe