- VMware Technology Network

- :

- Cloud & SDDC

- :

- ESXi

- :

- ESXi Discussions

- :

- ESXi 6.7u3 PSoD Reoccurrences (Exception 13) - wha...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

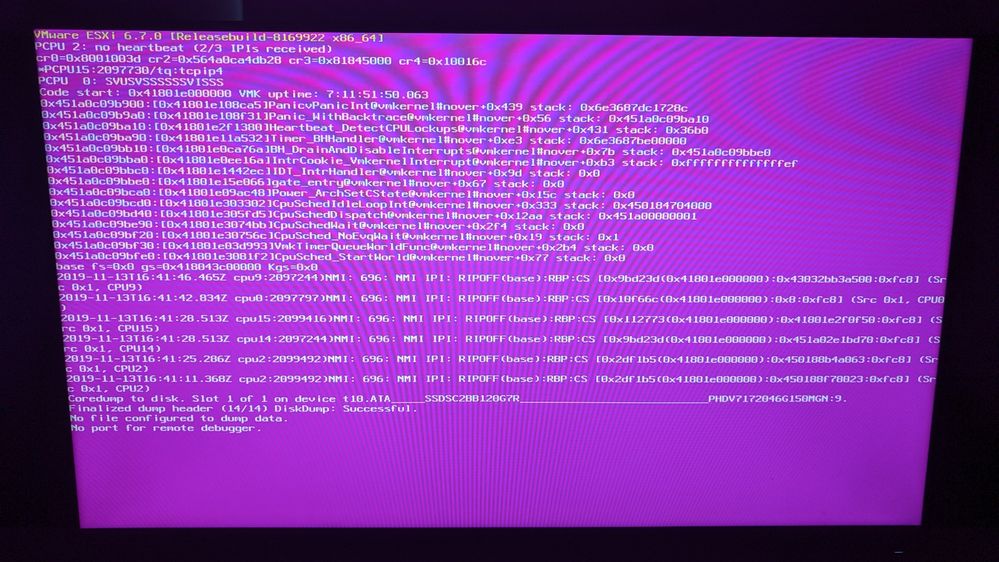

ESXi 6.7u3 PSoD Reoccurrences (Exception 13) - what could be the root cause

I have been running ESXi on this machine for about a year. Over the past few months I have been experiencing more and more PSoDs.

I don't have the slightest clue what specifically is causing them. I did grab the coredumps as described on the support website, but for a home user $300 for a support contract is a steep price. Nothing has changed on the system.

System specs:

1TB Samsung NVME Datastore

120 GB Intel SSD ESXi installation

AMD Ryzen 7 1700 Eight-Core Processor

64 GB ECC Samsung Memory

LSI 2008 HBA (pass through to guest)

6x 6TB Western Digital HDDs (pass through to guest)

GT710 GPU

X470 Master SLI/ac Motherboard

As the file is too large to attach here: https://drive.google.com/open?id=1mkEnaov-kT1PFJojx5U1-HftmJWweCvt

Above are the two latest dumps that have occurred on the system.

Is there any specific troubleshooting I can do to narrow down the cause of these dumps? According to the support page (VMware Knowledge Base), there are no troubleshooting steps besides reaching out to VMware Support and shelling out $300.

Can you paste a screenshot of that PSOD?

A PSOD is usually connected with the firmware or drivers of I/O devices such as storage HBAs and network interfaces.

We have Dell PE R730 servers in a cluster. We upgraded to VMware 6.7 U3 and got PSODs on three servers. I opened a case with VMware support; the tech suspects the qfle3 driver is causing the PSOD and asked me to check with Dell whether newer firmware fixes the issue. The tech also suggested that reverting to the bnx2 driver might help, but was not sure it would.

From newest to oldest, here are the three most recent PSoDs I have received on the system, all within the past month.

Redirect the output of the command below to a text file and attach it to the thread:

[root@esxi-1:/tmp] esxcfg-info -a > /tmp/info.txt

When did this PSOD happen? I mean, after what event? Did it happen right after booting? After starting a service? After starting a specific VM? After rescanning a specific adapter?

There isn't anything abnormal being done when the PSoD occurs - generally it happens when I am asleep or not actively using esxi2 (there are active VMs running, but I am not interacting with the machine).

It could be specifically related to disk activity, but I am not sure how to determine that.
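One rough way to test the disk-activity idea is to look for storage latency warnings shortly before each crash in a saved copy of the vmkernel log. The sketch below is only an illustration, not a VMware tool. It assumes the log uses the timestamp prefix and the message texts ("performance has deteriorated", "@BlueScreen:") that appear in the excerpts quoted later in this thread. The function names and the one-hour window are made up for the example.

```python
import re
from datetime import datetime, timedelta

# Timestamp prefix as it appears in the vmkernel log lines quoted in this thread.
TS_RE = re.compile(r"^(\d{4}-\d{2}-\d{2}T\d{2}:\d{2}:\d{2}\.\d{3})Z ")


def parse_ts(line):
    """Return the line's timestamp as a datetime, or None if it has no prefix."""
    m = TS_RE.match(line)
    return datetime.strptime(m.group(1), "%Y-%m-%dT%H:%M:%S.%f") if m else None


def latency_warnings_before_crash(lines, window=timedelta(hours=1)):
    """Map each @BlueScreen timestamp to the latency warnings logged within
    `window` before it (hypothetical helper, window size is an assumption)."""
    warnings, crashes = [], []
    for line in lines:
        ts = parse_ts(line)
        if ts is None:
            continue  # continuation lines without a timestamp are skipped
        if "performance has deteriorated" in line:
            warnings.append((ts, line.strip()))
        elif "@BlueScreen:" in line:
            crashes.append(ts)
    return {
        crash: [w for w in warnings if timedelta(0) <= crash - w[0] <= window]
        for crash in crashes
    }
```

Calling `latency_warnings_before_crash(open(path))` on a copied log file (`path` being wherever you saved it) returns one entry per crash. An empty list for a crash means no latency warning was logged in the window before it, which would argue against a simple "disk got slow, then the host crashed" pattern without ruling out storage entirely.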

Is there any activity around midnight, such as scheduled backup jobs or anything else that pushes storage I/O above normal IOPS? Do you have an RDM vDisk or any passthrough device in a specific VM?

Nothing abnormal and no "new" activity has occurred around any of these PSoDs.

I have a passthrough HBA with 6 WD Red 6TB drives passed through to a NAS for ZFS.

Can you temporarily remove the passthrough for a while to see whether the problem goes away?

Please also check the vmware.log files in that VM's directory.

There doesn't seem to be a way to force these PSoDs, and unfortunately I don't know how long I would have to wait for one to occur (or not) if I do remove the passthrough device.

In addition to the above, most of the other guests (the ones without a passthrough device) depend on the guest that has the passthrough device attached, because it is the NAS/ZFS storage pool. So if I remove the passthrough device from that guest, there would not be much activity coming from the other guests.

And it just occurred again (around 30 minutes ago):

2019-11-04T02:17:51.349Z cpu3:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-04T02:27:51.355Z cpu15:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-04T02:37:51.361Z cpu12:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-04T02:47:51.368Z cpu12:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-04T02:57:51.374Z cpu9:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-04T03:03:01.017Z cpu0:2097656)WARNING: ScsiDeviceIO: 1564: Device t10.NVMe____Samsung_SSD_970_PRO_1TB_________________EA43B39156382500 performance has deteriorated. I/O latency increased from average value of 246 microseconds to

2019-11-04T03:03:01.017Z cpu0:2097656)WARNING: 5044 microseconds.

2019-11-04T03:07:51.380Z cpu2:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-04T03:17:51.386Z cpu8:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-04T03:22:22.553Z cpu1:2097656)ScsiDeviceIO: 1530: Device t10.NVMe____Samsung_SSD_970_PRO_1TB_________________EA43B39156382500 performance has improved. I/O latency reduced from 5044 microseconds to 994 microseconds.

2019-11-04T03:27:51.392Z cpu13:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-04T03:30:34.980Z cpu13:2097656)ScsiDeviceIO: 1530: Device t10.NVMe____Samsung_SSD_970_PRO_1TB_________________EA43B39156382500 performance has improved. I/O latency reduced from 994 microseconds to 577 microseconds.

2019-11-04T03:37:51.398Z cpu13:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-04T03:47:51.405Z cpu9:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-04T03:57:51.412Z cpu9:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-04T04:07:51.418Z cpu8:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-04T04:17:51.424Z cpu10:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-04T04:27:51.431Z cpu14:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-04T04:37:51.437Z cpu3:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-04T04:47:51.443Z cpu8:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

(repeats for 200 lines)

2019-11-06T02:17:52.871Z cpu9:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-06T02:27:52.876Z cpu8:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-06T02:37:52.881Z cpu1:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-06T02:47:52.885Z cpu9:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-06T02:57:52.890Z cpu4:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-06T03:07:52.895Z cpu4:2097754)DVFilter: 5963: Checking disconnected filters for timeouts

2019-11-06T03:16:45.593Z cpu0:2097414)World: 3015: PRDA 0x418040000000 ss 0x0 ds 0xfd0 es 0xfd0 fs 0xfd0 gs 0xfd0

2019-11-06T03:16:45.593Z cpu0:2097414)World: 3017: TR 0xfd8 GDT 0x450984200000 (0xfe7) IDT 0x418000164000 (0xfff)

2019-11-06T03:16:45.593Z cpu0:2097414)World: 3018: CR0 0x8001003d CR3 0xdd766000 CR4 0x10016c

2019-11-06T03:16:45.598Z cpu0:2097414)Backtrace for current CPU #0, worldID=2097414, fp=0x418040000350

2019-11-06T03:16:45.599Z cpu0:2097414)VMware ESXi 6.7.0 [Releasebuild-14320388 x86_64]

#PF Exception 14 in world 2097414:VSCSIPoll IP 0x451a08323500 addr 0x451a08323500

PTEs:0x100102023;0x101e455063;0x13733e063;0x80000001373a0063;

2019-11-06T03:16:45.600Z cpu0:2097414)cr0=0x8001003d cr2=0x451a08323500 cr3=0xdd766000 cr4=0x10016c

2019-11-06T03:16:45.600Z cpu0:2097414)frame=0x451a0119bd60 ip=0x451a08323500 err=17 rflags=0x10216

2019-11-06T03:16:45.600Z cpu0:2097414)rax=0x3bbb82c2433b9 rbx=0x418040000080 rcx=0x1ad6

2019-11-06T03:16:45.600Z cpu0:2097414)rdx=0x6d4a rbp=0x418040000350 rsi=0x1

2019-11-06T03:16:45.601Z cpu0:2097414)rdi=0x0 r8=0x0 r9=0x7ffc4447d3dbcc46

2019-11-06T03:16:45.601Z cpu0:2097414)r10=0x196 r11=0x0 r12=0x3bb161e9cafc

2019-11-06T03:16:45.601Z cpu0:2097414)r13=0x451a0119be88 r14=0x451a08323500 r15=0x8

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:0 world:2097414 name:"VSCSIPoll" (S)

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:1 world:2146103 name:"fdsAIO" (SH)

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:2 world:2146076 name:"fdsAIO" (SH)

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:3 world:2100348 name:"vmm1:Windows_10_64bit" (V)

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:4 world:2146104 name:"fdsAIO" (SH)

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:5 world:2099789 name:"NetWorld-VM-2099788" (S)

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:6 world:2100174 name:"vmm5:plex" (V)

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:7 world:2100167 name:"vmm0:plex" (V)

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:8 world:2099793 name:"vmm3:nas" (V)

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:9 world:2100173 name:"vmm4:plex" (V)

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:10 world:2097175 name:"tlbflushcounttryflush" (S)

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:11 world:2146071 name:"fdsAIO" (SH)

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:12 world:2099791 name:"vmm1:nas" (V)

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:13 world:2146077 name:"fdsAIO" (SH)

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:14 world:2100345 name:"vmm0:Windows_10_64bit" (V)

2019-11-06T03:16:45.601Z cpu0:2097414)pcpu:15 world:2100180 name:"LSI-2100167:0" (S)

2019-11-06T03:16:45.601Z cpu0:2097414)@BlueScreen: #PF Exception 14 in world 2097414:VSCSIPoll IP 0x451a08323500 addr 0x451a08323500

PTEs:0x100102023;0x101e455063;0x13733e063;0x80000001373a0063;

2019-11-06T03:16:45.601Z cpu0:2097414)Code start: 0x418000000000 VMK uptime: 4:01:28:52.738

2019-11-06T03:16:45.603Z cpu0:2097414)base fs=0x0 gs=0x418040000000 Kgs=0x0

2019-11-06T03:16:45.603Z cpu0:2097414)vmkernel 0x0 .data 0x0 .bss 0x0

2019-11-06T03:16:45.603Z cpu0:2097414)chardevs 0x418000740000 .data 0x417fc0e00000 .bss 0x417fc0e00440

2019-11-06T03:16:45.603Z cpu0:2097414)user 0x418000748000 .data 0x417fc1200000 .bss 0x417fc1210a80

2019-11-06T03:16:45.603Z cpu0:2097414)procfs 0x418000843000 .data 0x417fc1800000 .bss 0x417fc1800240

2019-11-06T03:16:45.603Z cpu0:2097414)lfHelper 0x418000846000 .data 0x417fc1c00000 .bss 0x417fc1c03d00

2019-11-06T03:16:45.603Z cpu0:2097414)vsanapi 0x41800084d000 .data 0x417fc2000000 .bss 0x417fc2003740

2019-11-06T03:16:45.603Z cpu0:2097414)vsanbase 0x418000865000 .data 0x417fc2400000 .bss 0x417fc24154e0

2019-11-06T03:16:45.603Z cpu0:2097414)vprobe 0x418000881000 .data 0x417fc2800000 .bss 0x417fc2811d00

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_mgmt 0x4180008cf000 .data 0x417fc2c00000 .bss 0x417fc2c00200

2019-11-06T03:16:45.603Z cpu0:2097414)iodm 0x4180008d5000 .data 0x417fc3000000 .bss 0x417fc3000128

2019-11-06T03:16:45.603Z cpu0:2097414)dma_mapper_iommu 0x4180008da000 .data 0x417fc3400000 .bss 0x417fc3400080

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_4_0_0_mgmt_shim 0x4180008de000 .data 0x417fc3800000 .bss 0x417fc38002e8

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_3_0_0_mgmt_shim 0x4180008df000 .data 0x417fc3c00000 .bss 0x417fc3c00180

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_2_0_0_mgmt_shim 0x4180008e0000 .data 0x417fc4000000 .bss 0x417fc40001a0

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_2_0_0_vmkernel_shim 0x4180008e1000 .data 0x417fc4400000 .bss 0x417fc440c9c0

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_4_0_0_vmkernel_shim 0x4180008e9000 .data 0x417fc4800000 .bss 0x417fc4812bc0

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_3_0_0_vmkernel_shim 0x4180008ef000 .data 0x417fc4c00000 .bss 0x417fc4c0ff20

2019-11-06T03:16:45.603Z cpu0:2097414)vmkbsd 0x4180008f8000 .data 0x417fc5000000 .bss 0x417fc5007100

2019-11-06T03:16:45.603Z cpu0:2097414)vmkusb 0x418000941000 .data 0x417fc5400000 .bss 0x417fc5407a40

2019-11-06T03:16:45.603Z cpu0:2097414)vmw_ahci 0x4180009ba000 .data 0x417fc5800000 .bss 0x417fc58005c0

2019-11-06T03:16:45.603Z cpu0:2097414)igbn 0x4180009db000 .data 0x417fc5c00000 .bss 0x417fc5c00280

2019-11-06T03:16:45.603Z cpu0:2097414)nvme 0x418000a06000 .data 0x417fc6000000 .bss 0x417fc60004e0

2019-11-06T03:16:45.603Z cpu0:2097414)pciPassthru 0x418000a29000 .data 0x417fc6400000 .bss 0x417fc6402f00

2019-11-06T03:16:45.603Z cpu0:2097414)iscsi_trans 0x418000a39000 .data 0x417fc6800000 .bss 0x417fc6801740

2019-11-06T03:16:45.603Z cpu0:2097414)iscsi_trans_compat_shim 0x418000a4d000 .data 0x417fc6c00000 .bss 0x417fc6c01148

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_2_0_0_iscsiInc_shim 0x418000a4e000 .data 0x417fc7000000 .bss 0x417fc7000850

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_3_0_0_iscsiInc_shim 0x418000a52000 .data 0x417fc7400000 .bss 0x417fc7400850

2019-11-06T03:16:45.603Z cpu0:2097414)etherswitch 0x418000a57000 .data 0x417fc7800000 .bss 0x417fc7818000

2019-11-06T03:16:45.603Z cpu0:2097414)portcfg 0x418000aa6000 .data 0x417fc7c00000 .bss 0x417fc7c16fc0

2019-11-06T03:16:45.603Z cpu0:2097414)vswitch 0x418000ac4000 .data 0x417fc8000000 .bss 0x417fc8000640

2019-11-06T03:16:45.603Z cpu0:2097414)netsched_fifo 0x418000b15000 .data 0x417fc8400000 .bss 0x417fc8400060

2019-11-06T03:16:45.603Z cpu0:2097414)netsched_hclk 0x418000b17000 .data 0x417fc8800000 .bss 0x417fc8803ec0

2019-11-06T03:16:45.603Z cpu0:2097414)netioc 0x418000b27000 .data 0x417fc8c00000 .bss 0x417fc8c000a0

2019-11-06T03:16:45.603Z cpu0:2097414)lb_netqueue_bal 0x418000b2e000 .data 0x417fc9000000 .bss 0x417fc9000388

2019-11-06T03:16:45.603Z cpu0:2097414)dm 0x418000b35000 .data 0x417fc9400000 .bss 0x417fc9400000

2019-11-06T03:16:45.603Z cpu0:2097414)nmp 0x418000b38000 .data 0x417fc9800000 .bss 0x417fc9804630

2019-11-06T03:16:45.603Z cpu0:2097414)hpp 0x418000b6a000 .data 0x417fc9c00000 .bss 0x417fc9c02bf8

2019-11-06T03:16:45.603Z cpu0:2097414)vmw_satp_local 0x418000b74000 .data 0x417fca000000 .bss 0x417fca000020

2019-11-06T03:16:45.603Z cpu0:2097414)vmw_satp_default_aa 0x418000b78000 .data 0x417fca400000 .bss 0x417fca400000

2019-11-06T03:16:45.603Z cpu0:2097414)vmw_psp_lib 0x418000b7a000 .data 0x417fca800000 .bss 0x417fca800290

2019-11-06T03:16:45.603Z cpu0:2097414)vmw_psp_fixed 0x418000b7c000 .data 0x417fcac00000 .bss 0x417fcac00000

2019-11-06T03:16:45.603Z cpu0:2097414)vmw_psp_rr 0x418000b7f000 .data 0x417fcb000000 .bss 0x417fcb000060

2019-11-06T03:16:45.603Z cpu0:2097414)vmw_psp_mru 0x418000b85000 .data 0x417fcb400000 .bss 0x417fcb400000

2019-11-06T03:16:45.603Z cpu0:2097414)vmci 0x418000b87000 .data 0x417fcb800000 .bss 0x417fcb808500

2019-11-06T03:16:45.603Z cpu0:2097414)healthchk 0x418000bb2000 .data 0x417fcbc00000 .bss 0x417fcbc15d20

2019-11-06T03:16:45.603Z cpu0:2097414)teamcheck 0x418000bcd000 .data 0x417fcc000000 .bss 0x417fcc016240

2019-11-06T03:16:45.603Z cpu0:2097414)vlanmtucheck 0x418000be4000 .data 0x417fcc400000 .bss 0x417fcc4162c0

2019-11-06T03:16:45.603Z cpu0:2097414)heartbeat 0x418000c00000 .data 0x417fcc800000 .bss 0x417fcc816140

2019-11-06T03:16:45.603Z cpu0:2097414)shaper 0x418000c19000 .data 0x417fccc00000 .bss 0x417fccc17e90

2019-11-06T03:16:45.603Z cpu0:2097414)lldp 0x418000c33000 .data 0x417fcd000000 .bss 0x417fcd000050

2019-11-06T03:16:45.603Z cpu0:2097414)cdp 0x418000c39000 .data 0x417fcd400000 .bss 0x417fcd417380

2019-11-06T03:16:45.603Z cpu0:2097414)ipfix 0x418000c57000 .data 0x417fcd800000 .bss 0x417fcd8167c0

2019-11-06T03:16:45.603Z cpu0:2097414)tcpip4 0x418000c7a000 .data 0x417fcdc00000 .bss 0x417fcdc18180

2019-11-06T03:16:45.603Z cpu0:2097414)dvsdev 0x418000dd4000 .data 0x417fce000000 .bss 0x417fce000030

2019-11-06T03:16:45.603Z cpu0:2097414)dvfilter 0x418000dd7000 .data 0x417fce400000 .bss 0x417fce400ac0

2019-11-06T03:16:45.603Z cpu0:2097414)lacp 0x418000dfc000 .data 0x417fce800000 .bss 0x417fce800140

2019-11-06T03:16:45.603Z cpu0:2097414)drbg 0x418000e0b000 .data 0x417fcec00000 .bss 0x417fcec01120

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_4_0_0_dvfilter_shim 0x418000e2f000 .data 0x417fcf000000 .bss 0x417fcf0009e8

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_2_0_0_dvfilter_shim 0x418000e30000 .data 0x417fcf400000 .bss 0x417fcf4009f0

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_3_0_0_dvfilter_shim 0x418000e31000 .data 0x417fcf800000 .bss 0x417fcf8009e8

2019-11-06T03:16:45.603Z cpu0:2097414)esxfw 0x418000e32000 .data 0x417fcfc00000 .bss 0x417fcfc16c40

2019-11-06T03:16:45.603Z cpu0:2097414)crypto_fips 0x418000e4d000 .data 0x417fd0000000 .bss 0x417fd00019a0

2019-11-06T03:16:45.603Z cpu0:2097414)dvfilter-generic-fastpath 0x418000e7b000 .data 0x417fd0400000 .bss 0x417fd04162b0

2019-11-06T03:16:45.603Z cpu0:2097414)vmkibft 0x418000e9c000 .data 0x417fd0800000 .bss 0x417fd08039c0

2019-11-06T03:16:45.603Z cpu0:2097414)vmkfbft 0x418000ea0000 .data 0x417fd0c00000 .bss 0x417fd0c02b60

2019-11-06T03:16:45.603Z cpu0:2097414)vfat 0x418000ea3000 .data 0x417fd1000000 .bss 0x417fd1002820

2019-11-06T03:16:45.603Z cpu0:2097414)lvmdriver 0x418000eaf000 .data 0x417fd1400000 .bss 0x417fd1403a40

2019-11-06T03:16:45.603Z cpu0:2097414)deltadisk 0x418000ed0000 .data 0x417fd1800000 .bss 0x417fd1807ec0

2019-11-06T03:16:45.603Z cpu0:2097414)vdfm 0x418000f0b000 .data 0x417fd1c00000 .bss 0x417fd1c001c0

2019-11-06T03:16:45.603Z cpu0:2097414)gss 0x418000f10000 .data 0x417fd2000000 .bss 0x417fd2002b18

2019-11-06T03:16:45.603Z cpu0:2097414)vmfs3 0x418000f38000 .data 0x417fd2400000 .bss 0x417fd2407c40

2019-11-06T03:16:45.603Z cpu0:2097414)sunrpc 0x418001064000 .data 0x417fd2800000 .bss 0x417fd2803b40

2019-11-06T03:16:45.603Z cpu0:2097414)vmklink_mpi 0x418001082000 .data 0x417fd2c00000 .bss 0x417fd2c02600

2019-11-06T03:16:45.603Z cpu0:2097414)swapobj 0x418001088000 .data 0x417fd3000000 .bss 0x417fd30032f8

2019-11-06T03:16:45.603Z cpu0:2097414)nfsclient 0x418001092000 .data 0x417fd3400000 .bss 0x417fd3404340

2019-11-06T03:16:45.603Z cpu0:2097414)nfs41client 0x4180010b4000 .data 0x417fd3800000 .bss 0x417fd3805700

2019-11-06T03:16:45.603Z cpu0:2097414)vflash 0x418001124000 .data 0x417fd3c00000 .bss 0x417fd3c03740

2019-11-06T03:16:45.603Z cpu0:2097414)procMisc 0x418001130000 .data 0x417fd4000000 .bss 0x417fd4000000

2019-11-06T03:16:45.603Z cpu0:2097414)nrdma 0x418001131000 .data 0x417fd4400000 .bss 0x417fd4417fc0

2019-11-06T03:16:45.603Z cpu0:2097414)nrdma_vmkapi_shim 0x418001179000 .data 0x417fd4800000 .bss 0x417fd48012b8

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_4_0_0_rdma_shim 0x41800117a000 .data 0x417fd4c00000 .bss 0x417fd4c01370

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_3_0_0_rdma_shim 0x41800117b000 .data 0x417fd5000000 .bss 0x417fd5000fc0

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_3_0_0_nmp_shim 0x41800117d000 .data 0x417fd5400000 .bss 0x417fd5400df0

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_4_0_0_nmp_shim 0x41800117e000 .data 0x417fd5800000 .bss 0x417fd5800df0

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_2_0_0_nmp_shim 0x41800117f000 .data 0x417fd5c00000 .bss 0x417fd5c00d68

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_4_0_0_iscsi_shim 0x418001180000 .data 0x417fd6000000 .bss 0x417fd6001240

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_3_0_0_iscsi_shim 0x418001181000 .data 0x417fd6400000 .bss 0x417fd6400970

2019-11-06T03:16:45.603Z cpu0:2097414)vmkapi_v2_2_0_0_iscsi_shim 0x418001182000 .data 0x417fd6800000 .bss 0x417fd6800970

2019-11-06T03:16:45.603Z cpu0:2097414)vrdma 0x418001183000 .data 0x417fd6c00000 .bss 0x417fd6c02580

2019-11-06T03:16:45.603Z cpu0:2097414)balloonVMCI 0x4180011a4000 .data 0x417fd7000000 .bss 0x417fd7000000

2019-11-06T03:16:45.603Z cpu0:2097414)hbr_filter 0x4180011a5000 .data 0x417fd7400000 .bss 0x417fd7400300

2019-11-06T03:16:45.603Z cpu0:2097414)ftcpt 0x4180011d6000 .data 0x417fd7800000 .bss 0x417fd7802fc0

2019-11-06T03:16:45.603Z cpu0:2097414)filtmod 0x418001223000 .data 0x417fd7c00000 .bss 0x417fd7c04180

2019-11-06T03:16:45.603Z cpu0:2097414)svmmirror 0x418001235000 .data 0x417fd8000000 .bss 0x417fd8000100

2019-11-06T03:16:45.603Z cpu0:2097414)cbt 0x418001242000 .data 0x417fd8400000 .bss 0x417fd84000c0

2019-11-06T03:16:45.603Z cpu0:2097414)migrate 0x418001245000 .data 0x417fd8800000 .bss 0x417fd8805400

2019-11-06T03:16:45.603Z cpu0:2097414)vfc 0x4180012c6000 .data 0x417fd8c00000 .bss 0x417fd8c02cc0

Coredump to disk.

2019-11-06T03:16:45.654Z cpu0:2097414)Slot 1 of 1 on device t10.ATA_____SSDSC2BB120G7R____________________________PHDV7172046G150MGN:9.

2019-11-06T03:16:45.654Z cpu0:2097414)Dump: 474: Using dump slot size 2684354560.

2019-11-06T03:16:45.664Z cpu0:2097414)Dump: 2853: Using dump buffer size 98304
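The fault line in the log above can be unpacked a little further. The sketch below decodes the page-fault error code using the bit layout defined by the x86 architecture and checks bit 63 (no-execute) of the last entry in the `PTEs:` list. It rests on two assumptions that the log itself does not confirm: that `err=17` is printed in decimal, and that the last value in `PTEs:` is the leaf page-table entry. The function names are made up for the example.

```python
import re

# x86 page-fault error code bits (architecturally defined).
PF_BITS = {
    0: "protection violation on a present page",
    1: "write access",
    2: "user-mode access",
    3: "reserved bit set in a paging entry",
    4: "instruction fetch",
}


def decode_pf_error(err):
    """Return descriptions of the bits set in a page-fault error code."""
    return [desc for bit, desc in PF_BITS.items() if err & (1 << bit)]


def parse_fault(text):
    """Extract world, IP, fault address, err and PTE chain from a PSoD excerpt."""
    head = re.search(
        r"#PF Exception 14 in world (\d+):(\S+) IP (0x[0-9a-f]+) addr (0x[0-9a-f]+)",
        text,
    )
    err = re.search(r"\berr=(\d+)", text)
    ptes = re.search(r"PTEs:((?:0x[0-9a-f]+;)+)", text)
    return {
        "world_id": int(head.group(1)),
        "world_name": head.group(2),
        "ip": int(head.group(3), 16),
        "addr": int(head.group(4), 16),
        "err": int(err.group(1)),  # assumption: the log prints err in decimal
        "ptes": [int(p, 16) for p in ptes.group(1).split(";") if p],
    }


# Lines copied from the log excerpt in this thread.
excerpt = """\
#PF Exception 14 in world 2097414:VSCSIPoll IP 0x451a08323500 addr 0x451a08323500
PTEs:0x100102023;0x101e455063;0x13733e063;0x80000001373a0063;
frame=0x451a0119bd60 ip=0x451a08323500 err=17 rflags=0x10216
"""

fault = parse_fault(excerpt)
print(decode_pf_error(fault["err"]))
print("fault address equals IP:", fault["ip"] == fault["addr"])
# assumption: the last PTE listed is the leaf entry; bit 63 is the NX bit
print("last PTE has NX set:", bool((fault["ptes"][-1] >> 63) & 1))
```

If both assumptions hold, the fault would read as an instruction fetch from a present, no-execute page, with the instruction pointer itself being the bad address. That would suggest the VSCSIPoll world jumped to a data address, for example through a corrupted function pointer. On its own this does not distinguish a driver bug from faulty memory or CPU.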

If we look at the info.txt file you made me create earlier:

\==+UserWorld :

|----World ID...........................................2097414

|----World Name.........................................VSCSIPoll

|----World Command Line.................................

|----Cartel ID..........................................0

|----Parent Cartel ID...................................0

|----Scheduler Group ID.................................0

|----World Group ID.....................................2097153

|----Cartel Group ID....................................0

|----Session ID.........................................0

|----World Flags........................................1

|----World State........................................WAIT

|----World Type.........................................S

|----World Security Domain..............................0

\==+Vcpu Id 2097414 Times :

|----Up Time......................................144878366847

|----Used Time....................................206028286

|----System time..................................0

|----Overlap time.................................502140

|----Run Time.....................................234501541

|----Wait Time....................................144430893153

|----Busy wait Time...............................0

|----Total Wait Time..............................144430893153

|----CoStop Time..................................0

|----Idle Time....................................0

|----Ready Time...................................212972153

|----Maxlimited Time..............................0

|----Vmk Time.....................................0

|----Guest Time...................................0

\==+VCPU Stats :

|----Swap Latency.................................0

|----Compress Latency.............................0

This is the relevant info there.

Did you set this device, "Samsung_SSD_970_PRO_1TB", as the host's cache datastore device?

Also, is that disk configured as the core dump location? Do you have enough free space on this datastore?

Can you move the core dump location to a larger volume and report the result?

Undercity of Virtualization: What is the VMKernel Core Dump - Part I

The NVMe is used as a VMFS datastore, not as a cache store.

I believe the default dump location is the Intel SSD. Both devices have plenty of space left (at least half the space is unallocated).

I will look at changing the dump location if the space is not adequate, though. Of the 1 TB on the Samsung SSD datastore, maybe 400-500 GB is still available, which I would assume is sufficient for ESXi to write a dump. The other SSDs should have sufficient space available as well.

So if it's not related to the disks behind your datastores, the PSOD can be related to any of the following issues:

1. Incompatibility between a specific advanced feature of the physical CPU and the ESXi version (I had this problem before with Hyper-Threading and ESXi 6.0 on a ProLiant DL380 G7).

2. Incompatibility with the memory configuration (this is less likely, because you would have hit the problem much earlier, but it does not hurt to check the HCL again).

3. A physical or logical problem in the disk/datastore configured for the VMs and for the host's cache/swap space (for example, swap used under memory ballooning).

4. Outdated firmware on the physical server (if you have not upgraded it for a long time).

Finally, if you can't find the real cause of the issue, you should contact technical support.

I ended up rolling back to 6.7 flat, as I can't recall if this occurred before or after I upgraded to 6.7u3.

Waiting to see if that makes a difference. If it does, then I know it is software related. If it doesn't, I can re-upgrade and begin testing hardware.

Unfortunately, today, after rolling back, I received another PSoD.

2019-11-10T06:08:27.043Z cpu2:2097656)ScsiDeviceIO: 2994: Cmd(0x459ab7868300) 0x2a, CmdSN 0x800e002f from world 2099954 to dev "t10.NVMe____Samsung_SSD_970_PRO_1TB_________________EA43B39156382500" failed H:0x0 D:0x28 P:0x0 Invalid sense data: 0x0

2019-11-10T06:08:27.043Z cpu2:2097656)0x0 0x0.

2019-11-10T06:08:27.043Z cpu2:2097656)ScsiDeviceIO: 2994: Cmd(0x459ab79b1f40) 0x2a, CmdSN 0x800e002f from world 2099954 to dev "t10.NVMe____Samsung_SSD_970_PRO_1TB_________________EA43B39156382500" failed H:0x0 D:0x28 P:0x0 Invalid sense data: 0x0

2019-11-10T06:08:27.043Z cpu2:2097656)0x0 0x0.

2019-11-10T06:08:27.043Z cpu2:2097656)ScsiDeviceIO: 2994: Cmd(0x459ab797c840) 0x2a, CmdSN 0x800e002f from world 2099954 to dev "t10.NVMe____Samsung_SSD_970_PRO_1TB_________________EA43B39156382500" failed H:0x0 D:0x28 P:0x0 Invalid sense data: 0x0

2019-11-10T06:08:27.043Z cpu2:2097656)0x0 0x0.

2019-11-10T06:08:27.043Z cpu2:2097656)ScsiDeviceIO: 2994: Cmd(0x459ab7877300) 0x2a, CmdSN 0x800e002f from world 2099954 to dev "t10.NVMe____Samsung_SSD_970_PRO_1TB_________________EA43B39156382500" failed H:0x0 D:0x28 P:0x0 Invalid sense data: 0x0

2019-11-10T06:08:27.043Z cpu2:2097656)0x0 0x0.

2019-11-10T06:08:27.043Z cpu2:2097656)ScsiDeviceIO: 2994: Cmd(0x459ab79a63c0) 0x2a, CmdSN 0x800e002f from world 2099954 to dev "t10.NVMe____Samsung_SSD_970_PRO_1TB_________________EA43B39156382500" failed H:0x0 D:0x28 P:0x0 Invalid sense data: 0x0

2019-11-10T06:08:27.043Z cpu2:2097656)0x0 0x0.

2019-11-10T06:08:27.043Z cpu2:2097656)ScsiDeviceIO: 2994: Cmd(0x459ab795d1c0) 0x2a, CmdSN 0x800e002f from world 2099954 to dev "t10.NVMe____Samsung_SSD_970_PRO_1TB_________________EA43B39156382500" failed H:0x0 D:0x28 P:0x0 Invalid sense data: 0x0

2019-11-10T06:08:27.043Z cpu2:2097656)0x0 0x0.

2019-11-10T06:08:27.043Z cpu1:2097691)nvme:NvmeCore_GetCmdInfo:895:Queue [1] Command List Empty, DumpProgress: Faulting world regs Faulting world regs (01/14)

DumpProgress: Vmm code/data Vmm code/data (02/14)

DumpProgress: Vmk code/rodata/stack Vmk code/rodata/stack (03/14)

DumpProgress: Vmk data/heap Vmk data/heap (04/14)

DumpProgress: PCPU PCPU (05/14)

2019-11-13T16:42:10.029Z cpu15:2097730)Dump: 3185: Dumped 1796 pages of recentMappings

DumpProgress: World-specific data World-specific data (06/14)

DumpProgress: VASpace VASpace (08/14)

2019-11-13T16:42:12.101Z cpu15:2097730)HeapMgr: 1021: Dumping HeapMgr region for pageSize 0 with 54638 PDEs.

2019-11-13T16:42:20.673Z cpu15:2097730)HeapMgr: 1021: Dumping HeapMgr region for pageSize 0 with 0 PDEs.

2019-11-13T16:42:20.673Z cpu15:2097730)XMap: 479: Dumping XMap region with 16384 PDEs.

2019-11-13T16:42:25.350Z cpu15:2097730)VAArray: 799: Dumping VAArray region

2019-11-13T16:42:25.354Z cpu15:2097730)Timer: 1420: Dumping Timer region with 8 PDEs.

2019-11-13T16:42:25.416Z cpu15:2097730)FastSlab: 1062: Dumping FastSlab region with 32768 PDEs.

2019-11-13T16:42:33.188Z cpu15:2097730)MPage: 707: Dumping MPage region

2019-11-13T16:42:49.325Z cpu15:2097730)VAArray: 799: Dumping VAArray region

2019-11-13T16:42:49.370Z cpu15:2097730)PShare: 3180: Dumping pshareChains region with 2 PDEs.

2019-11-13T16:42:50.185Z cpu15:2097730)VASpace: 1218: VASpace "WorldStore" [451a00000 - 459a00001] had no registered dump handler.

2019-11-13T16:42:50.185Z cpu15:2097730)VASpace: 1218: VASpace "vmkStats" [45aa40000 - 45ca40000] had no registered dump handler.

2019-11-13T16:42:50.185Z cpu15:2097730)VASpace: 1218: VASpace "pageRetireBitmap" [45ca40000 - 45ca40204] had no registered dump handler.

2019-11-13T16:42:50.185Z cpu15:2097730)VASpace: 1218: VASpace "pageRetireBitmapIdx" [45ca80000 - 45ca80001] had no registered dump handler.

2019-11-13T16:42:50.185Z cpu15:2097730)VASpace: 1218: VASpace "llswap" [45cac0000 - 465b40000] had no registered dump handler.

2019-11-13T16:42:50.185Z cpu15:2097730)VASpace: 1218: VASpace "LPageStatus" [465b40000 - 465b40041] had no registered dump handler.

2019-11-13T16:42:50.185Z cpu15:2097730)Migrate: 394: Dumping Migrate region with 65536 PDEs

2019-11-13T16:42:52.881Z cpu15:2097730)VASpace: 1218: VASpace "XVMotion" [467b80000 - 467ba0000] had no registered dump handler.

DumpProgress: PFrame PFrame (09/14)

2019-11-13T16:42:52.901Z cpu15:2097730)PFrame: 5499: Dumping PFrame region with 33018 PDEs

DumpProgress: Dump Files Dump Files (12/14)

DumpProgress: Collecting userworld dumps Collecting userworld dumps (13/14)

DumpProgress: Finalized dump header Finalized dump header (14/14)

A photo can be found below:

I will start troubleshooting each component individually: memtest for the memory, CPU stress tests, and verifying firmware (I did upgrade the BIOS firmware, but as far as I know it is not possible to downgrade it).

If all else fails, I will unfortunately have to start replacing components or pay for a support contract.
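The repeated `ScsiDeviceIO` failures logged just before this last PSoD can be decoded into something more readable. In VMware's log format, `H:`, `D:` and `P:` are the host, device and plugin status, and the byte after `Cmd(...)` is the SCSI operation code. The sketch below is illustrative only. The lookup tables hold a handful of standard SCSI values, not a complete list, and the function name is made up for the example.

```python
import re

# Standard SCSI status byte values (subset).
SCSI_STATUS = {
    0x00: "GOOD",
    0x02: "CHECK CONDITION",
    0x08: "BUSY",
    0x18: "RESERVATION CONFLICT",
    0x28: "TASK SET FULL",
    0x40: "TASK ABORTED",
}

# A few common SCSI operation codes (subset).
SCSI_OPCODES = {
    0x28: "READ(10)",
    0x2A: "WRITE(10)",
    0x88: "READ(16)",
    0x8A: "WRITE(16)",
}

LINE_RE = re.compile(
    r"Cmd\(0x[0-9a-f]+\) (0x[0-9a-f]+),.*?"
    r"failed H:(0x[0-9a-f]+) D:(0x[0-9a-f]+) P:(0x[0-9a-f]+)"
)


def decode_scsi_failure(line):
    """Decode opcode and H/D/P status from one ScsiDeviceIO failure line.

    Returns None when the line does not match the expected format.
    """
    m = LINE_RE.search(line)
    if not m:
        return None
    opcode, host, device, plugin = (int(g, 16) for g in m.groups())
    return {
        "opcode": SCSI_OPCODES.get(opcode, hex(opcode)),
        "host_status": host,
        "device_status": SCSI_STATUS.get(device, hex(device)),
        "plugin_status": plugin,
    }
```

Applied to the lines above, the opcode `0x2a` maps to WRITE(10) and `D:0x28` maps to TASK SET FULL, meaning the device reported that its command queue could not accept more work. That would be consistent with the NVMe drive being saturated or stalling shortly before the crash. It does not show whether the drive, its driver, or something upstream is at fault.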

If the PSoDs started to appear after the BIOS update, that may be the exact reason. If not, I'd strip the host down to the bare minimum of hardware before troubleshooting individual components.